9 Customer Sentiment Examples: From App Reviews to Socials


You're here because someone on your team just asked "So... what do we do with sentiment analysis?" and you realized the training deck doesn't answer that. They get why it matters. What they don't get is

- whether "The update fixed my issue but holy hell that took forever" counts as positive,

- how to handle a five-star review that's dripping with sarcasm,

- or what emotion tag to slap on a chat transcript where the customer goes from furious to grateful in four messages.

Each channel has its own quirks when it comes to customer sentiment analysis. For example, analyzing sentiment in an App Store review and in a Reddit community thread requires completely different approaches.

- App Store reviews give you structured star ratings paired with text, making patterns easier to spot.

- Reddit discussions sprawl across dozens of comments, mix sarcasm with praise, and bury sentiment three replies deep.

Spoiler: sentiment analysis of social media comments and support tickets has its own nuances too.

Lucija Knezic, our Senior CSM, and I grabbed the messiest, most real-world customer sentiment examples we could find across support tickets, social mentions, NPS responses, and transcripts. In this article, we're answering the questions your team is asking:

- What separates neutral from negative when the words sound identical?

- Which emotions matter enough to track separately?

- When does a sentiment tag trigger a workflow versus just sitting in a dashboard?

Key insights

- Sentiment is never simply “positive” or “negative.” It’s a signal with a job attached. A five-star review can still be a churn risk if it contains a blocker. A one-star rant can be useless noise unless it repeats as a pattern. So the move is to tag sentiment and intent. Bug, pricing friction, onboarding confusion, feature request, trust issue. That’s how you stop arguing and start prioritizing.

- Context changes everything. App Store reviews are structured and permanent, so mismatches between stars and text matter a lot. Social is fast and messy, and the risk is speed plus visibility. Support tickets are about the emotional arc, because customers often start angry and end satisfied if you fix it quickly. Reddit and forums are slow-burn sentiment, and the “truth” is in the thread, not the first post.

- Group analysis beats hero work. You don’t get insights by reading three loud messages. You get them by clustering 100 similar ones and watching the trend. That’s where you catch the real drivers, like “Doesn’t Work” suddenly becoming 20% of conversation after a release, or refund requests dragging sentiment even when pricing comments look fine overall.

- Emotion labels are only useful if they trigger action. “Confused” should route to onboarding or help content. “Anxious” belongs to trust, security messaging, and clearer policies. “Angry + urgent” is an SLA and escalation issue, not a copy issue.

- Finally, close the loop with receipts. Ship the fix, then check if the tag share drops and sentiment climbs. If nothing moves, assume the solution didn’t land, wasn’t discoverable, or solved only half the problem.
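The routing idea from the emotion-label insight can be written down as a small lookup table. This is a minimal Python sketch: the queue names and the `route` function are hypothetical, and only the emotion-to-owner pairings come from the list above.

```python
# Hypothetical routing table: emotion label -> owning queue.
# Only the pairings come from the article; the queue names are illustrative.
ROUTES = {
    "confused": "onboarding_and_help_content",
    "anxious": "trust_and_security_messaging",
}

def route(emotion: str, urgent: bool = False) -> str:
    """Return the queue a tagged message should land in."""
    # "Angry + urgent" is an SLA and escalation issue, not a copy issue.
    if emotion == "angry" and urgent:
        return "sla_escalation"
    # Anything without an explicit rule stays in the dashboard for trend review.
    return ROUTES.get(emotion, "dashboard_only")

print(route("confused"))            # onboarding_and_help_content
print(route("angry", urgent=True))  # sla_escalation
print(route("delighted"))           # dashboard_only
```

The fallback matters as much as the rules: a label with no owner is a dashboard metric, not a workflow.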

Customer Sentiment Analysis Examples by Channel

Every channel your customers use to talk about you requires a different lens for sentiment analysis. Not because the emotions are different, but because the context, structure, and intent behind each message type shifts dramatically.

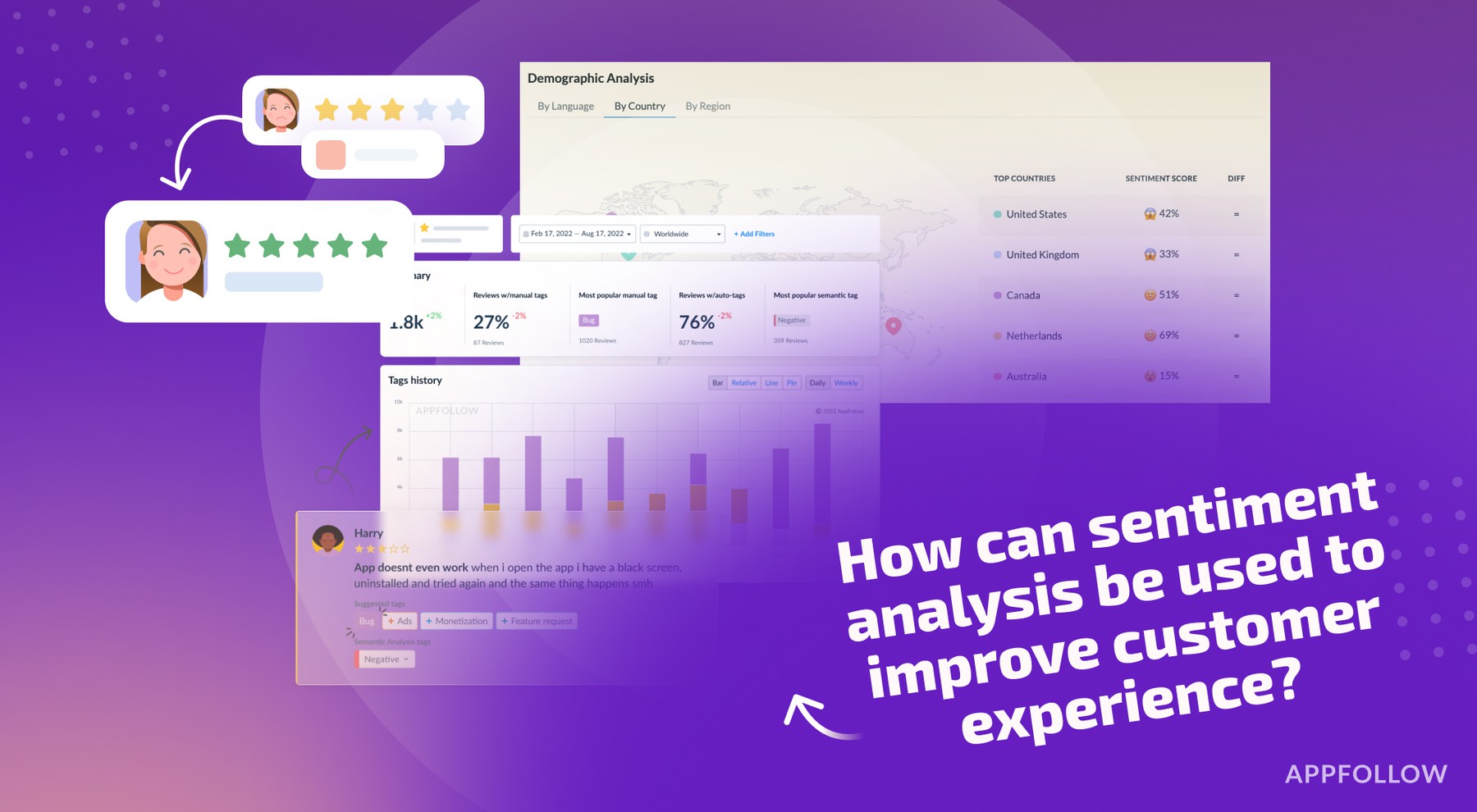

- App store reviews come with built-in sentiment signals. A star rating gives you the headline before you read a word. Someone drops a 1-star review saying "Love this app, just wish it had dark mode"? The rating tells you they're frustrated enough to punish you publicly, even if the words sound mild. You're analyzing sentiment against expectation here.

The gap between what they said and what they rated reveals the intensity. App store sentiment also lives forever and influences download decisions, which means a negative review from 2023 still damages you in 2026 if you never responded.

- Social media sentiment hides in threads, replies, quote tweets, and comment sections where the original post might say one thing but the real story unfolds six comments deep. Social moves fast, sentiment shifts within hours, and you're often analyzing incomplete conversations because people DM the resolution.

- Customer support tickets give you the richest sentiment data because you see the entire emotional journey in one place. The opening message usually carries peak frustration: "This is the third time I'm reaching out about this." By message four, they might write "Thanks for staying with me on this," which reads positive but you need to tag the overall ticket sentiment based on resolution, not just the final reply.

- Forums and communities operate like ongoing focus groups where sentiment about a feature might span a 50-comment thread across three weeks. Someone posts "The new dashboard is confusing" and gets 23 upvotes, then another user replies with a workaround that gets 15 upvotes and a "This should be built-in" response. You're not analyzing one person's sentiment anymore. The collective agreement signals product gaps louder than individual complaints.
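The star-versus-text gap described for app store reviews can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `Review` class, the -1..1 text score (which would come from whatever sentiment model you already use), and the threshold of 1.0.

```python
from dataclasses import dataclass

@dataclass
class Review:
    stars: int         # 1..5 from the store
    text_score: float  # -1.0..1.0, assumed to come from an upstream sentiment model

def star_score(stars: int) -> float:
    """Map 1..5 stars onto the same -1..1 scale as the text score."""
    return (stars - 3) / 2

def mismatch(review: Review, threshold: float = 1.0) -> bool:
    """True when the rating and the wording disagree by at least `threshold`."""
    return abs(review.text_score - star_score(review.stars)) >= threshold

mild_words_one_star = Review(stars=1, text_score=0.6)    # "Love this app, just wish..."
consistent_rant = Review(stars=1, text_score=-0.8)
sarcastic_five_star = Review(stars=5, text_score=-0.5)

print(mismatch(mild_words_one_star))  # True
print(mismatch(consistent_rant))      # False
print(mismatch(sarcastic_five_star))  # True
```

Mismatched reviews are the ones worth a human read, because either the stars or the words are misleading.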

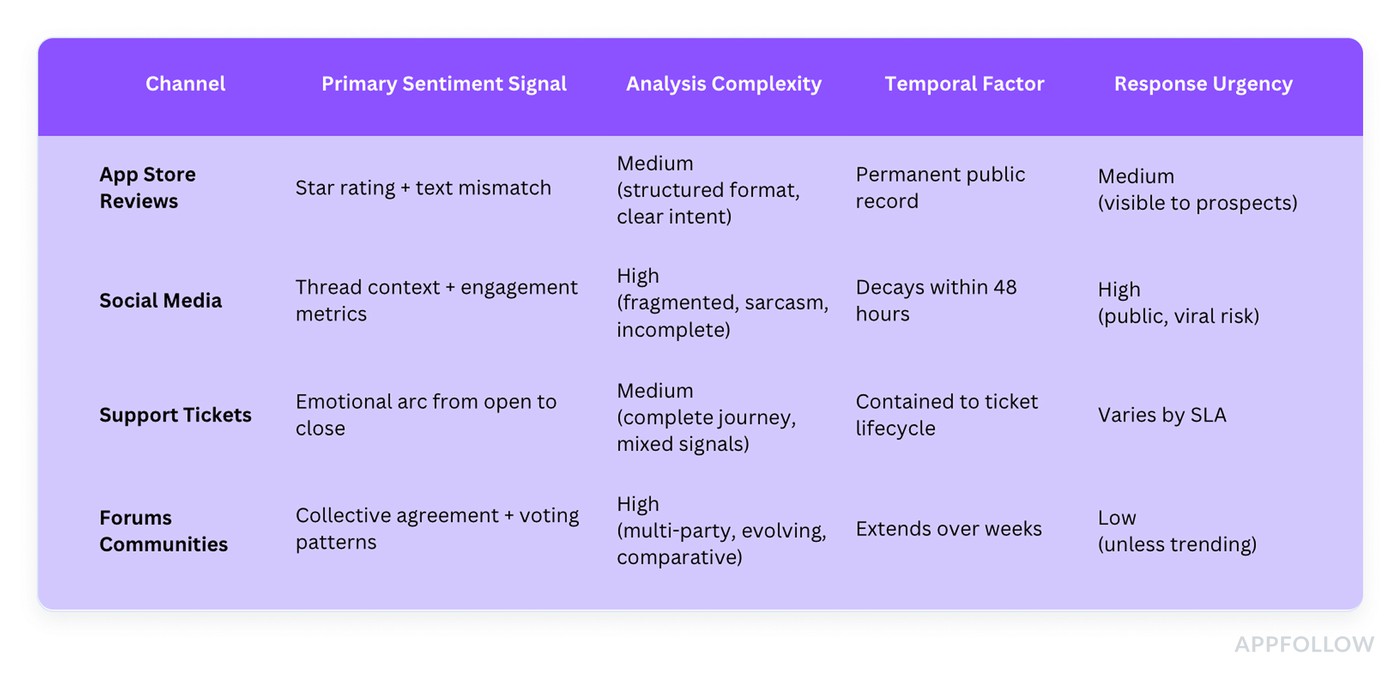

Here's how the analysis approach differs across channels:

The biggest mistake teams make with customer service sentiment analysis is applying the same tagging logic everywhere. A "thanks" in a support ticket after you resolved their issue? Positive. That same "thanks" as a sarcastic reply to your Twitter apology about a 6-hour outage? Negative, and you'd better recognize it as such before you add it to your positive sentiment dashboard and embarrass yourself in the next QBR.

Each channel below gets real examples showing exactly what positive, negative, neutral, and mixed sentiment looks like ⬇

App Store reviews sentiment analysis examples

App Store reviews are the fastest place to practice sentiment labeling because users tell you the verdict up front, then they drop the “why” in one or two lines. Still, it gets tricky. A 5-star review can hide a real bug. A 2-star rant can include a perfect feature request you should route straight to Product.

The snippets below are real-world patterns pulled from AppFollow clients, rewritten only to remove identifying details. Treat them like plug-and-play customer sentiment examples: what “positive,” “neutral,” and “negative” looks like when it’s wrapped in sarcasm, mixed feelings, or urgency. Use the labels to drive action, not vibes. Tag the emotion, tag the topic, assign an owner, and you’ve got a workflow instead of a pile of stars.

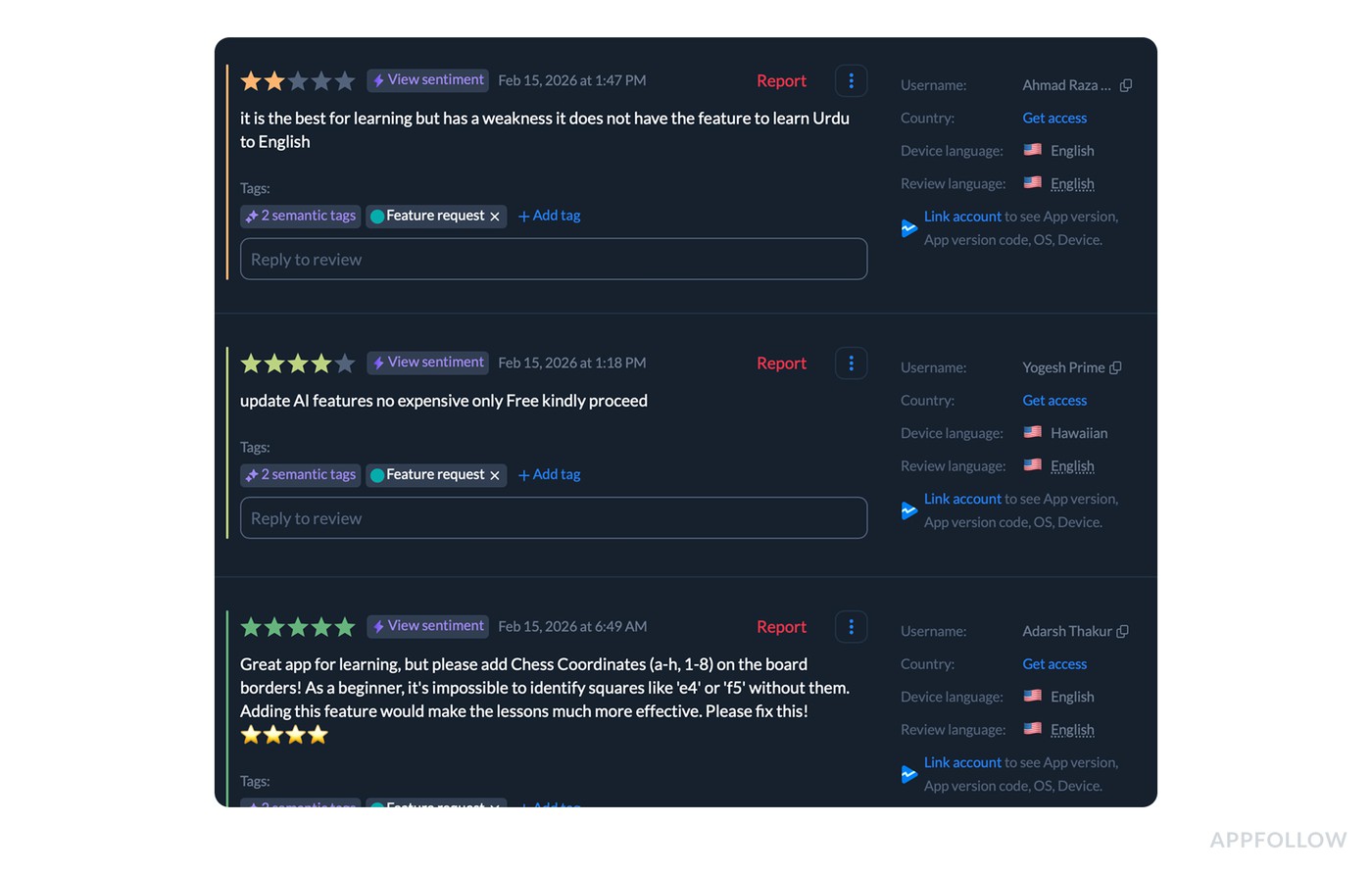

Example 1: Feature Request Detection

Feature requests are where sentiment gets weird in the best way. People can be genuinely happy and still tell you what’s missing, and they can also be annoyed, but the request itself is clear. So instead of treating reviews like three separate opinions, teams tag them as Feature request and analyze the cluster as one signal stream.

Here’s a tight sample of real-world, cleaned-up reviews that sit under that tag:

- “This app is great for learning, but it doesn’t have an option to learn Urdu to English. Please add Urdu-to-English support.”

- “Please update the AI features and keep them free. The paid options are too expensive.”

- “Great app for learning, but please add chess coordinates (a–h, 1–8) on the board edges. As a beginner, it’s hard to identify squares like ‘e4’ or ‘f5’ without them.”

Now the dashboard part, because that’s where this becomes analysis and not storytelling.

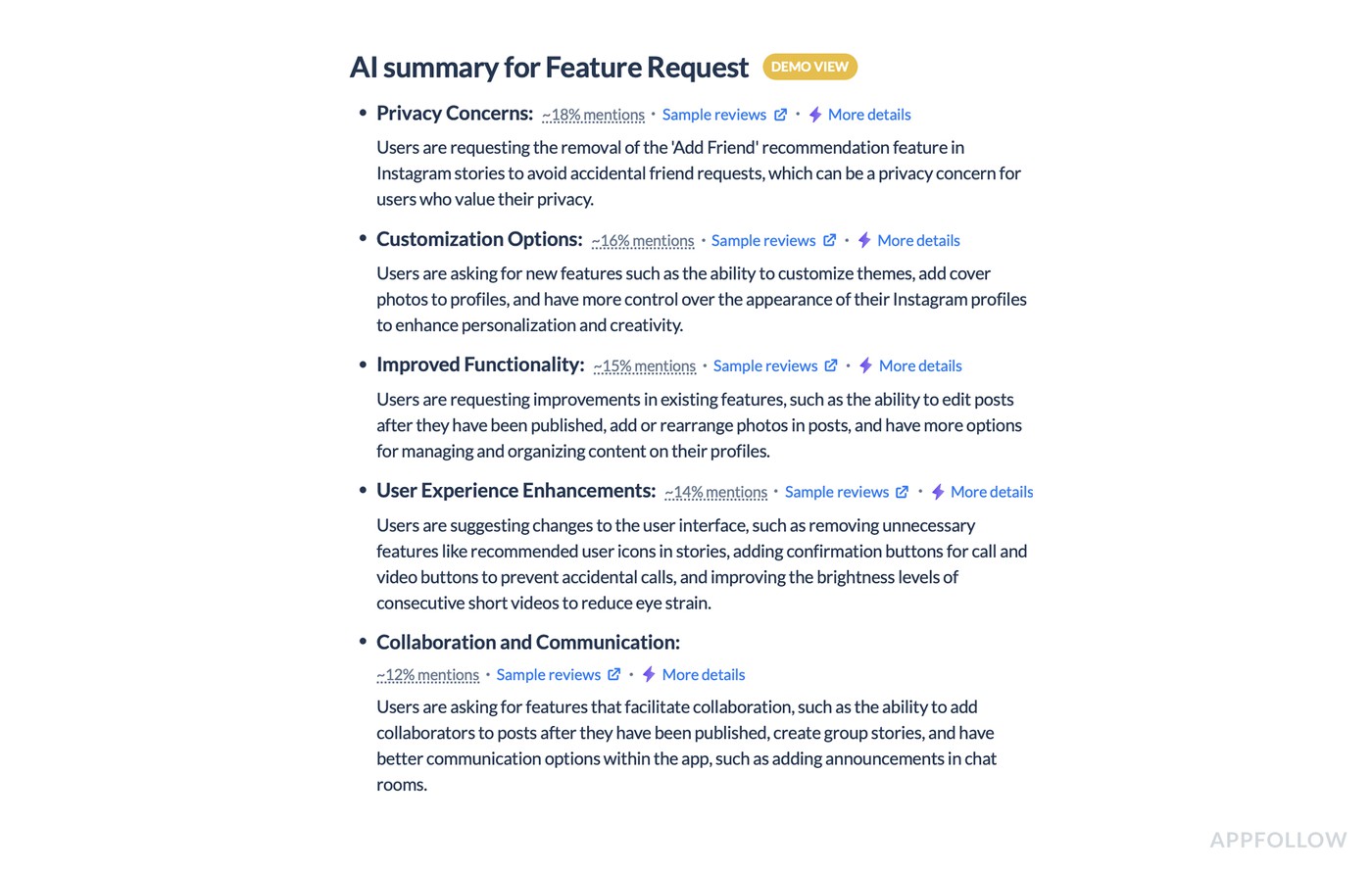

Teams open the Feature request tag inside the sentiment dashboard and look at it like a mini dataset.

- The first question is volume. How big is this tag compared to everything else?

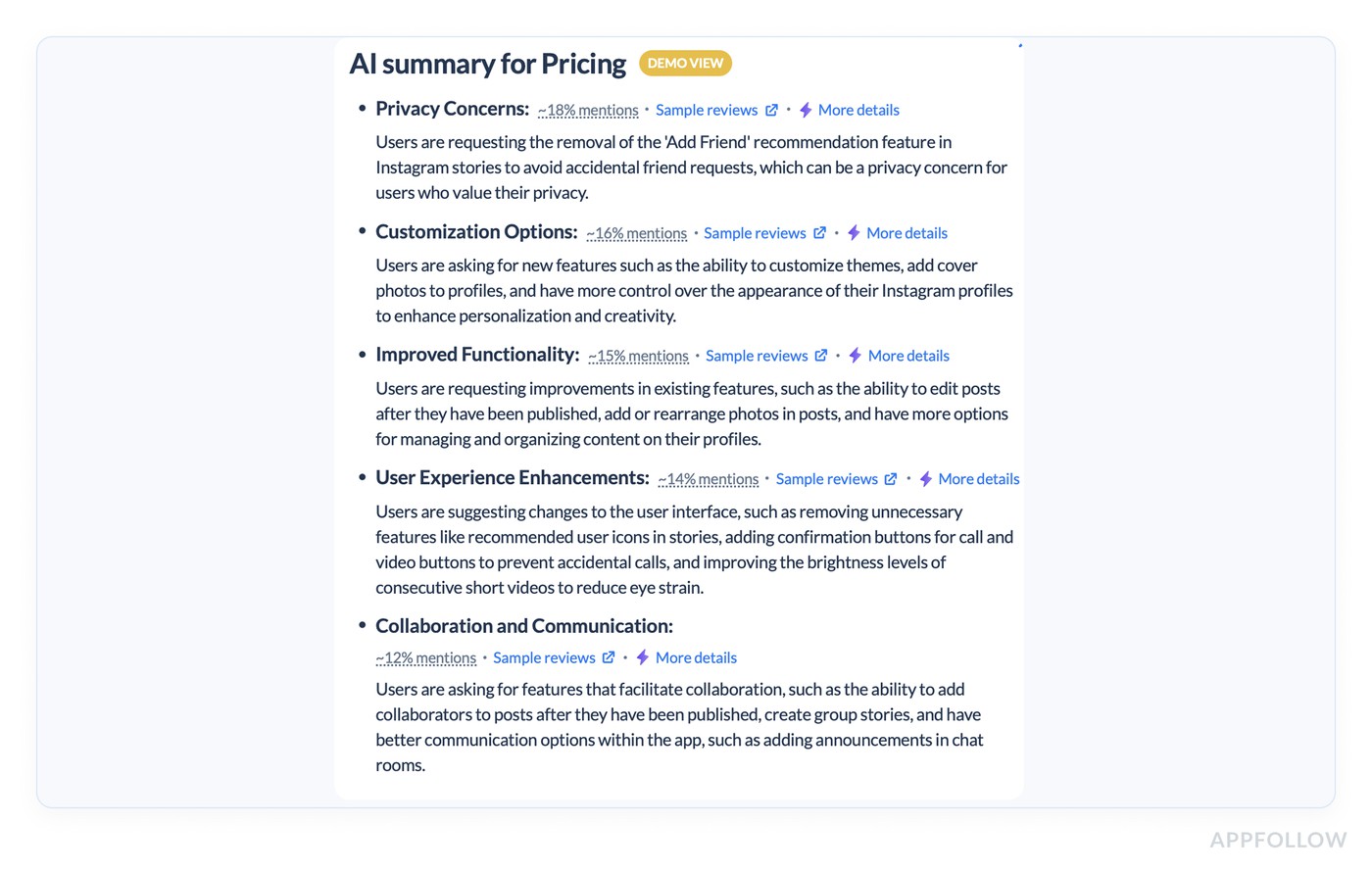

- In the tag summary view, you get sub-themes and their share of mentions, so you’re not guessing what’s inside the bucket. Privacy concerns can take ~18% of mentions, customization ~16%, improved functionality ~15%, UX enhancements ~14%. That breakdown tells you what “Feature request” means for your app right now, in weighted categories, not vibes.

- Then they read the sentiment shape of the tag. Feature requests often show up in high-star reviews because loyal users are asking for improvements, not rage-posting. That’s an important nuance for CX and Product. A five-star review that asks for a missing feature is still a retention risk if the same theme repeats week after week.

On the flip side, if the tag is dominated by low-star reviews, the missing feature is probably blocking a core workflow and dragging rating perception.

- Next comes trend analysis. They track how sentiment and volume move over time for the Feature request tag specifically. If it spikes right after a release, that’s usually friction introduced by change. If it steadily climbs, the product is maturing and users are bumping into “advanced needs” like export, integrations, or language coverage.

- Segmentation is where you stop arguing internally. Teams slice the Feature request tag by language and country to see whether a request is global or market-specific. Urdu-to-English support, for example, is not “a random request.” It’s a clear localization demand you can quantify by region. Pricing-related requests tend to cluster by market, too, and that gives Marketing and Product a shared view of where monetization messaging or tiering is creating friction.
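The segmentation step above can be sketched as a per-country share calculation. The review rows, country codes, and sub-theme names below are made up for illustration; the point is only the shape of the question: is a request global, or concentrated in one market?

```python
from collections import Counter

# Hypothetical feature-request reviews as (country, sub_theme) pairs.
reviews = [
    ("PK", "language_support"), ("PK", "language_support"), ("PK", "pricing"),
    ("US", "pricing"), ("US", "customization"), ("DE", "customization"),
]

def share_by_country(rows, sub_theme):
    """Fraction of each country's feature requests that mention `sub_theme`."""
    totals = Counter(country for country, _ in rows)
    hits = Counter(country for country, theme in rows if theme == sub_theme)
    # Counter returns 0 for countries with no hits, so every country gets a value.
    return {country: hits[country] / totals[country] for country in totals}

shares = share_by_country(reviews, "language_support")
for country, share in shares.items():
    print(f"{country}: {share:.0%}")
# PK: 67%
# US: 0%
# DE: 0%
```

A request that is two thirds of one market's asks and absent everywhere else is a localization decision, not a global roadmap item.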

What do they do with these insights?

- Product turns the top sub-themes into backlog items with receipts: frequency, trendline, and the exact phrasing users repeat.

- Support aligns response macros by theme, so replies are consistent, and expectations don’t get overpromised.

- Marketing uses the same tag view after shipping changes to validate impact. If the request volume drops and sentiment stabilizes, the update solved something real. If nothing moves, the feature might exist, but users can’t find it, or it only fixes part of the job.

That’s the point of doing customer sentiment analysis on feature requests: to measure which missing capabilities are shaping user emotion, ratings, and retention, then close the loop with proof.

Example 2: Bug Impact Analysis

Here’s how AppFollow users run bug impact analysis when the App Store turns into a live incident channel.

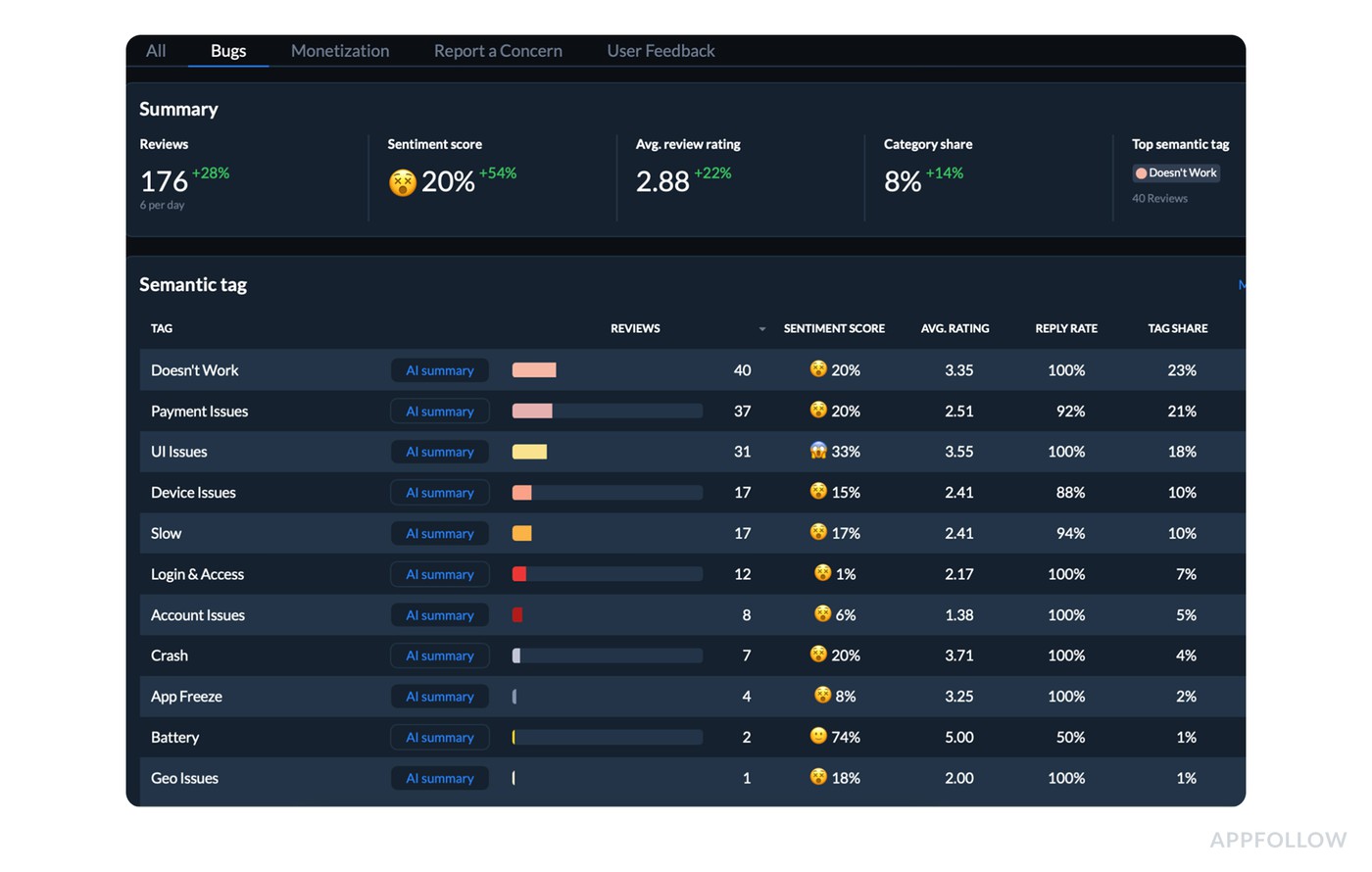

A release goes out. Reviews jump to 176 in the window shown, up 28%. Sentiment is stuck at 20% (up 54%, but only because the baseline was even worse), and the average rating sits at 2.88. That’s not “customers are grumpy.” That’s a measurable hit to acquisition and retention.

So the team opens the semantic tag table and asks one question: what’s driving the drop right now? The answer is visible fast.

The top tag is Doesn’t Work with 40 reviews, and it’s a big chunk of the conversation at 23% tag share. Inside that tag, the metrics stay ugly: 20% sentiment score, 3.35 average rating, 100% reply rate. Next to it, Payment Issues is also heavy at 37 reviews and 21% tag share, while UI Issues trails with 31 reviews and 18% tag share.

That comparison matters because it tells you whether you’re dealing with one dominant failure mode or three separate fires.
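The tag-share numbers above are easy to reproduce by hand, which is a good sanity check on any dashboard. This Python sketch assumes tag share means "reviews carrying the tag divided by all reviews in the window"; the 10-point lead used to call a tag dominant is a hypothetical rule of thumb, not something the dashboard defines.

```python
total_reviews = 176  # reviews in the window, from the example above
tag_counts = {"Doesn't Work": 40, "Payment Issues": 37, "UI Issues": 31}

# Assumed definition: tag share = reviews with the tag / all reviews in the window.
tag_share = {tag: round(100 * n / total_reviews) for tag, n in tag_counts.items()}
for tag, share in tag_share.items():
    print(f"{tag}: {share}%")
# Doesn't Work: 23%
# Payment Issues: 21%
# UI Issues: 18%

# Hypothetical rule of thumb: one dominant failure mode if the top tag leads by 10+ points.
ranked = sorted(tag_share.values(), reverse=True)
dominant = ranked[0] - ranked[1] >= 10
print("one dominant failure" if dominant else "several separate fires")
# several separate fires
```

With the top three tags within five points of each other, this window looks like three fires, even though “Doesn’t Work” leads on volume.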

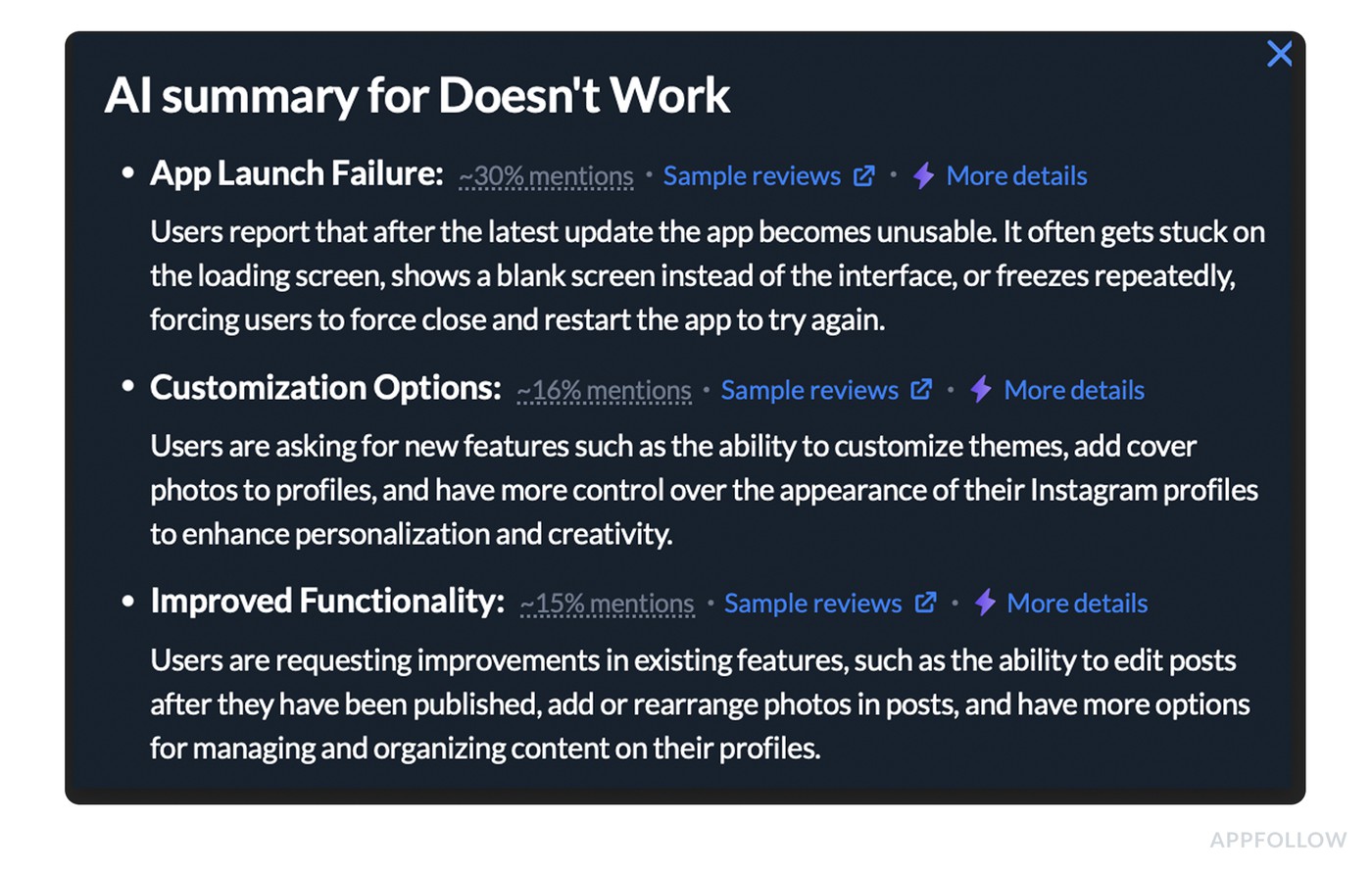

Then they open the “Doesn’t Work” summary and scan it for the issues with the highest % of mentions.

Now the move that makes this useful for CX, Product, and Marketing at the same time: a tight incident brief built from the dashboard.

What goes into that brief:

- Blast radius: 40 reviews tied to “Doesn’t Work,” plus its 23% share of all tagged issues in this period.

- Business impact: average rating 2.88 with sentiment at 20%, which is a conversion killer in the store.

- Priority context: “Doesn’t Work” outranks every other tag by volume, so this is the first fix that will move the needle.

Support gets a parallel play. Reply rate is already high, so they standardize the response to do two things: acknowledge the break, and collect the missing repro details that matter for triage (OS, device, app version). That keeps replies consistent and turns review responses into structured debugging input instead of apologies.

After the hotfix ships, the team goes back to the same dashboard view. They expect two changes on the sentiment graph: the overall sentiment trendline starts climbing, and the Doesn’t Work tag share starts shrinking as fewer new reviews get pulled into that cluster.

If the line doesn’t move, they don’t argue about feelings. They assume the fix didn’t reach everyone, the bug isn’t fully resolved, or a second failure mode is still active. That’s the loop.
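The before/after check can be made explicit so nobody argues about whether the line "really" moved. A minimal sketch, assuming you snapshot the same two dashboard numbers before and after the hotfix; the `fix_landed` helper, the 5-point minimum drop, and the "after" figures are all hypothetical.

```python
def fix_landed(before: dict, after: dict, min_drop_pts: float = 5.0) -> bool:
    """Both signals must move: tag share down, overall sentiment up."""
    share_drop = before["tag_share"] - after["tag_share"]
    sentiment_gain = after["sentiment"] - before["sentiment"]
    return share_drop >= min_drop_pts and sentiment_gain > 0

before = {"tag_share": 23, "sentiment": 20}   # from the example above
landed = {"tag_share": 12, "sentiment": 35}   # hypothetical post-hotfix numbers
stalled = {"tag_share": 22, "sentiment": 21}  # hypothetical: barely moved

print(fix_landed(before, landed))   # True
print(fix_landed(before, stalled))  # False
```

A `False` here is the cue to check rollout coverage, a partial fix, or a second failure mode.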

This is why CX leads like it, why PMs trust it, and why marketing stops guessing what to say in release notes. The dashboard tells you what broke, how widespread it is, and whether your fix changed the story customers are telling.

Example 3: Pricing Sentiment

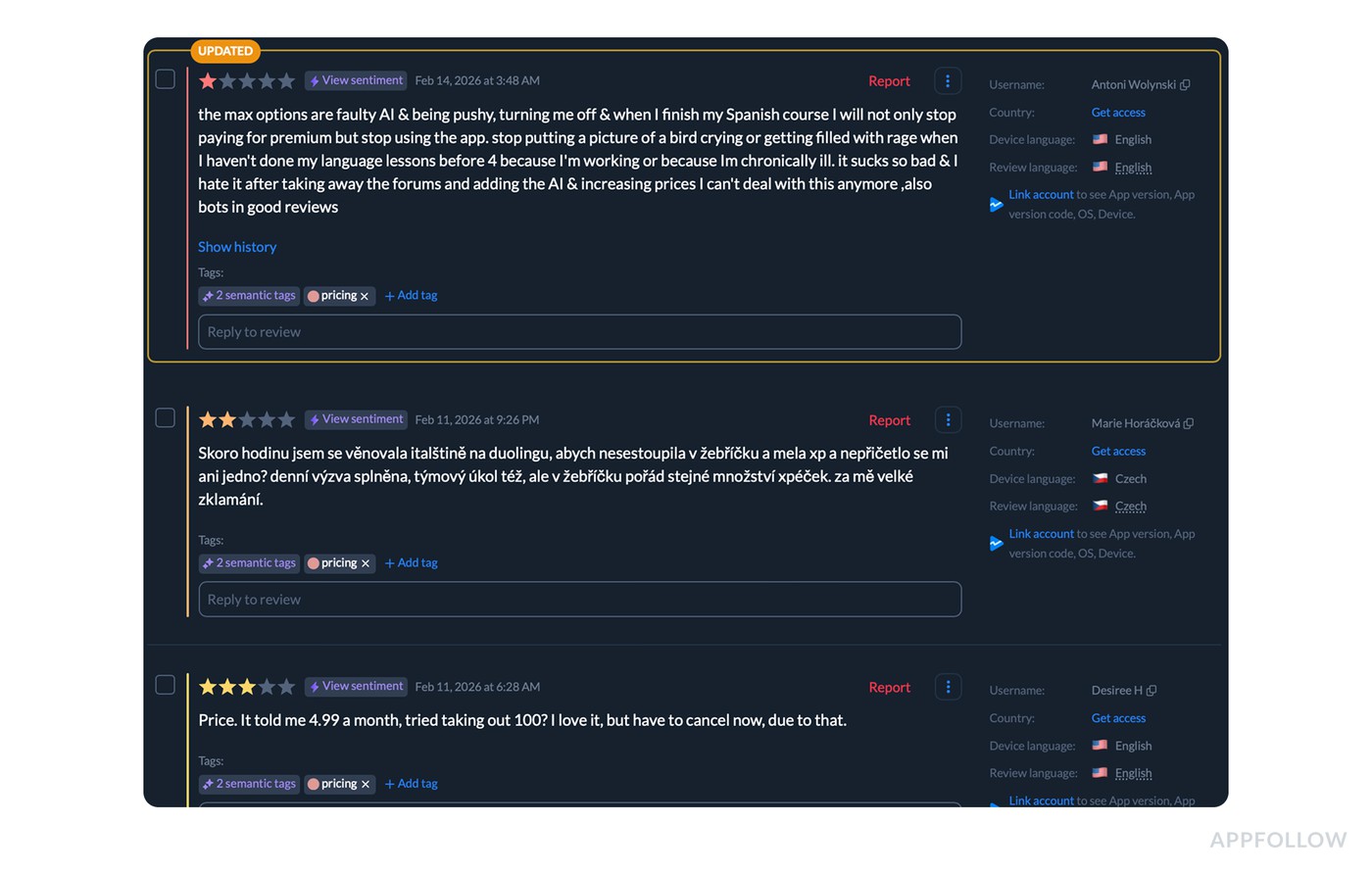

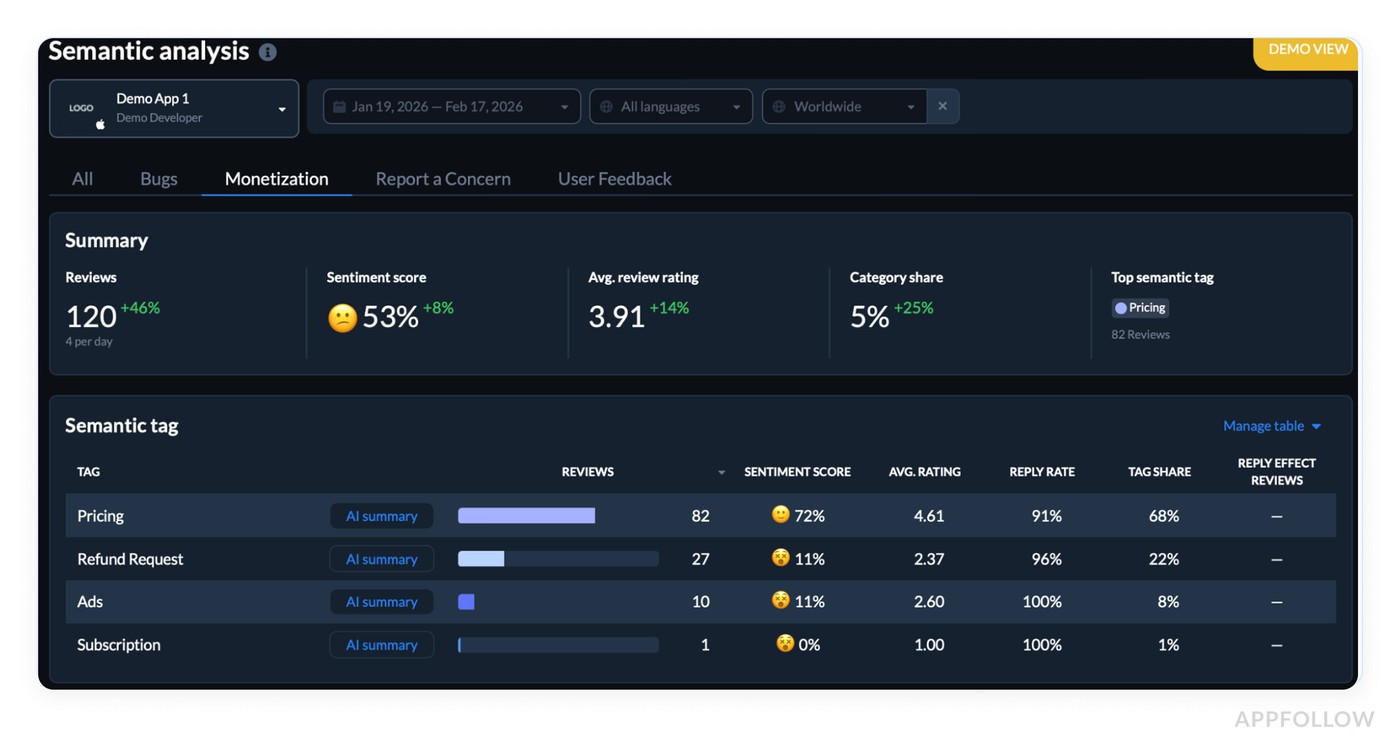

Pricing feedback is usually trust, fairness, and surprise rolled into one. In this case, AppFollow users pulled a pricing cluster that was big enough to matter and messy enough to lie if you read it manually.

They started in the Monetization view and saw the headline numbers for the selected window: 120 reviews (about 4/day, +46%), sentiment score 53% (+8%), avg rating 3.91 (+14%), and category share 5% (+25%).

AppFollow customer sentiment dashboard.

The top semantic tag was Pricing with 82 reviews. Roughly 40% of those reviews were in other languages, which is exactly where tagging helps, because the theme stays consistent even when the wording changes.

A few real review lines from the cluster (cleaned, anonymized):

- “You’re actively hiding your prices online and in the app. That’s scummy and scammy.”

- “Money-hungry. You can’t finish lessons with the standard energy. Gems are overpriced.”

- “Prices increased, AI feels pushy, and it’s turning me off.”

Here’s the part people miss. The dashboard shows Pricing is not automatically “negative.” In this dataset it sits at 72% sentiment with a 4.61 avg rating and 91% reply rate, plus it accounts for 68% of Monetization-tagged feedback.

Meanwhile, the real pain is concentrated in adjacent tags: Refund Request has 27 reviews with 11% sentiment and 2.37 avg rating (reply rate 96%), and Ads has 10 reviews with 11% sentiment and 2.60 avg rating (reply rate 100%).

That contrast is the insight. Pricing mentions include plenty of neutral or even positive “worth it” talk, while refunds and ads are the emotional sinkholes.

Process looked like this:

- They filtered to Monetization, then compared tags side by side to isolate what’s damaging sentiment.

- Next, they segmented the Pricing cluster by language and market to see whether “hidden prices” was global while “energy paywall” spiked in specific regions.

- Only after that did they standardize responses. Refund-related reviews got a tight billing flow and next-step instructions. Pricing transparency complaints got a single clear explanation of where pricing lives and why. Product got a ranked list of recurring friction themes, phrased in user language, with the tag volumes as receipts.

That’s a customer sentiment analysis example that leads to action. The win condition is visible in the trendline: after changes ship, teams watch whether Refund Request share shrinks, Pricing stays stable or improves, and the overall sentiment score keeps climbing instead of snapping back.

Example 4: Onboarding Experience in different countries

When onboarding breaks, it doesn’t always break evenly. One market screams “can’t pay.” Another says “won’t open.” If you average it all together, you’ll miss the point and ship the wrong fix.

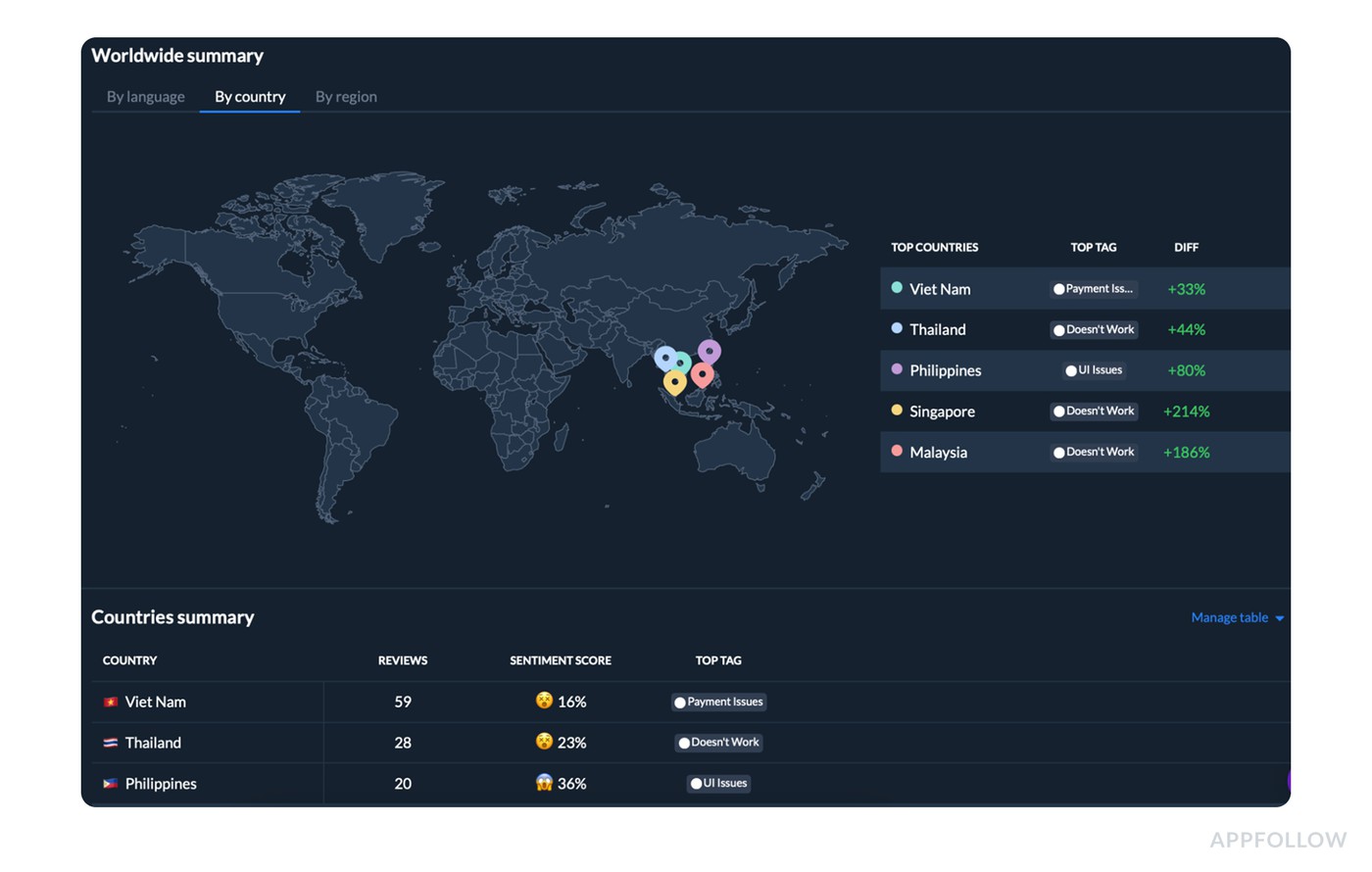

So AppFollow users start by filtering reviews to the Onboarding category, then open the Worldwide summary → By country view to see where the first-time experience is failing hardest.

AppFollow customer sentiment dashboard by countries.

- Viet Nam leads with 59 reviews and a 16% sentiment score, with Payment Issues as the top tag.

- Thailand follows with 28 reviews, 23% sentiment, and Doesn’t Work as the top tag.

- The Philippines shows 20 reviews, 36% sentiment, and UI Issues on top.

On the “what changed” side, the spikes are loud:

- Singapore: Doesn’t Work +214%

- Malaysia: Doesn’t Work +186%

- Philippines: UI Issues +80%

- Thailand: Doesn’t Work +44%

- Viet Nam: Payment Issues +33%

That’s the dashboard version of a summary: users are failing to get started, but the “why” differs by country. In Viet Nam it looks like payment friction during setup. In Thailand, Singapore, and Malaysia it looks like app stability or load failures early in the flow. In the Philippines, onboarding pain reads like interface confusion and control issues.
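A note on reading those spikes: a figure like +214% is a period-over-period change, and it says nothing about absolute volume. The sketch below assumes the comparison is against the previous window of equal length; the review counts are hypothetical, chosen only to land on the same percentage.

```python
def pct_change(previous: int, current: int) -> float:
    """Period-over-period change in percent."""
    if previous == 0:
        raise ValueError("no baseline: report the raw count instead of a percentage")
    return 100 * (current - previous) / previous

# Hypothetical counts: a small market can post a huge spike on a handful of reviews.
print(round(pct_change(previous=7, current=22)))  # 214
```

So read the spike next to the review count. A +33% move on Viet Nam's 59 reviews may represent more affected users than a far louder percentage in a market with a tiny baseline.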

Here’s a customer sentiment analysis example that teams use: they don’t pull 100 reviews into a spreadsheet. They take the top country + top tag combo, click into the cluster, and confirm the repeating failure mode in minutes.

Then the process turns into execution:

- Product gets a country-tagged incident brief, not a vague “onboarding is bad.”

- Support updates reply macros by tag to collect the missing triage details (payment provider, device, OS, version) and to stop sending generic apologies.

- Marketing rewrites the store listing or paywall copy only in the markets where payment confusion is driving the sentiment.

After shipping fixes, they come back to the same view and look for two signals: sentiment lifts in the affected countries, and the top onboarding tag stops spiking. If Singapore’s “Doesn’t Work” doesn’t drop after the hotfix, the release didn’t land, or the root cause is still active.


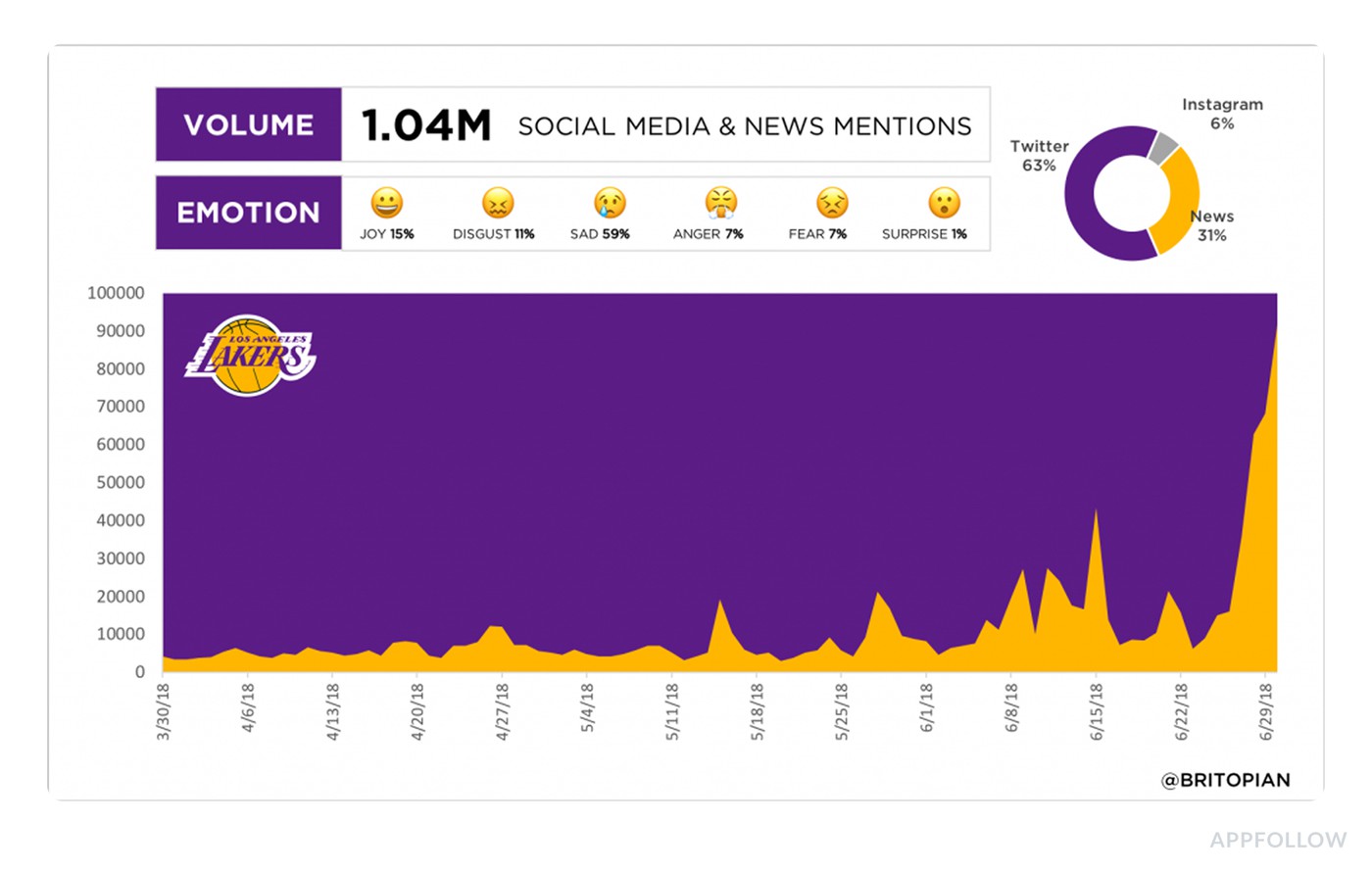

Social Media customer sentiment analysis examples

Social media is where sentiment gets loud, fast, and quite useful. People don’t write polite bug reports on X or TikTok comments. They post a screen recording with “why is this happening” energy, or they drop a one-liner that sounds positive until you catch the sarcasm. That’s why the analysis matters more than the quote.

In this section, we’re pulling the brightest real-world customer sentiment analysis examples from social mentions and breaking down how teams read them at scale. You’ll see how to separate hype from true product love, how to spot churn risk hiding inside “lol,” and how to tag emotion in a way that leads to action.

Not “negative.” More like frustration plus urgency, or delight plus a feature request.

The goal is simple. Turn social noise into a clean workflow: cluster by theme, track shifts over time, route the right issues to Support vs. Product, then measure whether the next release changes what people say.

Example 5: Customer service on X

On X, brand mentions look like a random feed until you run them through a listening tool. Then it becomes a queue with patterns.

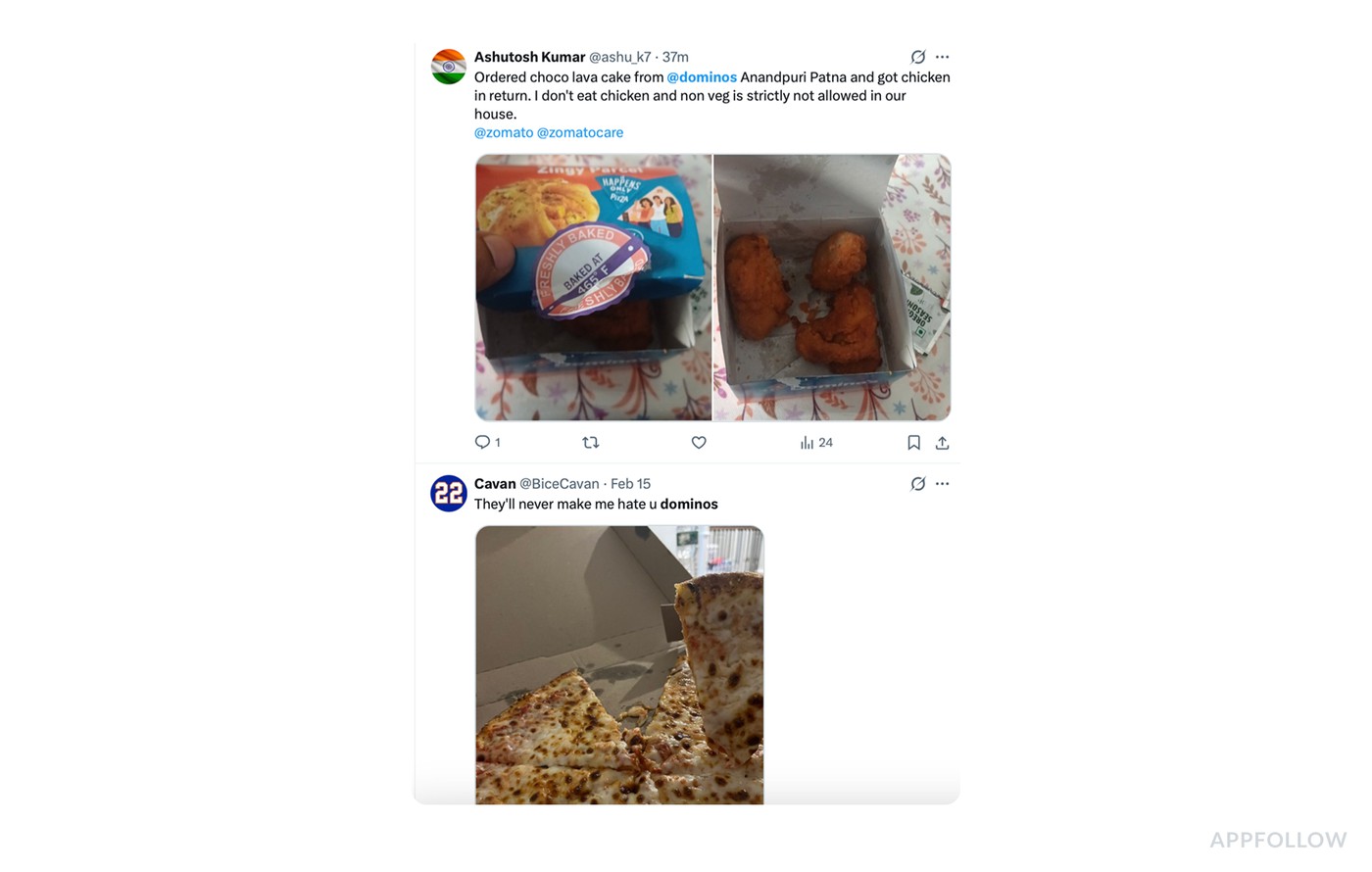

For example, here is the variety of feedback you see on X about Domino's Pizza:

- One post is pure love: “me running to the door when Dominos arrives.”

- Another is a loyalty flex: “They’ll never make me hate u.”

- Then you get the sharp stuff. A serious complaint about an order mix-up, plus dietary restrictions and multiple tags to escalate.

- Sprinkle in jokes about hiring agents, a vague “Dominos are falling,” and a pineapple meme reply.

If you try to handle this manually, you’ll spend your day reacting to the loudest tweet and miss what’s trending.

So support teams use social listening + CX tooling to do this at scale. The system does three jobs that humans can’t do fast enough:

- It collects every mention in one stream, across accounts and keywords.

- It classifies posts by intent and sentiment, usually with rules plus NLP: complaint vs praise vs question vs joke.

- It tracks trends over time, so you see spikes by topic, not just individual posts.
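The "rules" half of that classification step can be sketched with a few regular expressions. The keyword lists and the `classify` function are hypothetical, not how any specific listening tool works; real setups pair rules like these with an NLP model, since keywords alone miss jokes and sarcasm.

```python
import re

# Hypothetical keyword rules, checked in order so complaints win over praise words.
RULES = [
    ("complaint", re.compile(r"\b(wrong|refund|missing|never arrived|cold)\b", re.I)),
    ("question", re.compile(r"\?\s*$")),
    ("praise", re.compile(r"\b(love|best|amazing)\b", re.I)),
]

def classify(post: str) -> str:
    """Return the first matching intent, or 'unclassified' if no rule fires."""
    for intent, pattern in RULES:
        if pattern.search(post):
            return intent
    return "unclassified"  # memes and jokes usually land here and need a model or a human

print(classify("I love how my order is always wrong"))  # complaint
print(classify("Do you deliver after midnight?"))       # question
print(classify("best pizza in town"))                   # praise
print(classify("pineapple discourse again lol"))        # unclassified
```

Rule order is the design choice worth noticing: checking complaints first means a sarcastic "love" next to "wrong" gets routed as a problem, not counted as praise.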

That’s where the analysis starts. The team filters to the “complaint” cluster and spots the high-risk thread: wrong item delivered, non-veg in a household that forbids it, public escalation by tagging delivery partners.

Example of the Brandwatch dashboard.

The tool surfaces it because negative sentiment is spiking and the post includes keywords tied to safety and trust. It gets routed to a human fast, often as a ticket with priority rules.

Then the workflow becomes measurable. Support replies publicly within the tool, moves to DMs for order details, fixes it, and posts a resolution update. The platform keeps monitoring the thread and the wider “order issue” topic. You literally see the curve change: right after the response, the conversation shifts from angry pile-on potential to neutral or resolved. That’s your before/after, not a nice story someone tells in a retro.

Example 6: Reddit community feedback

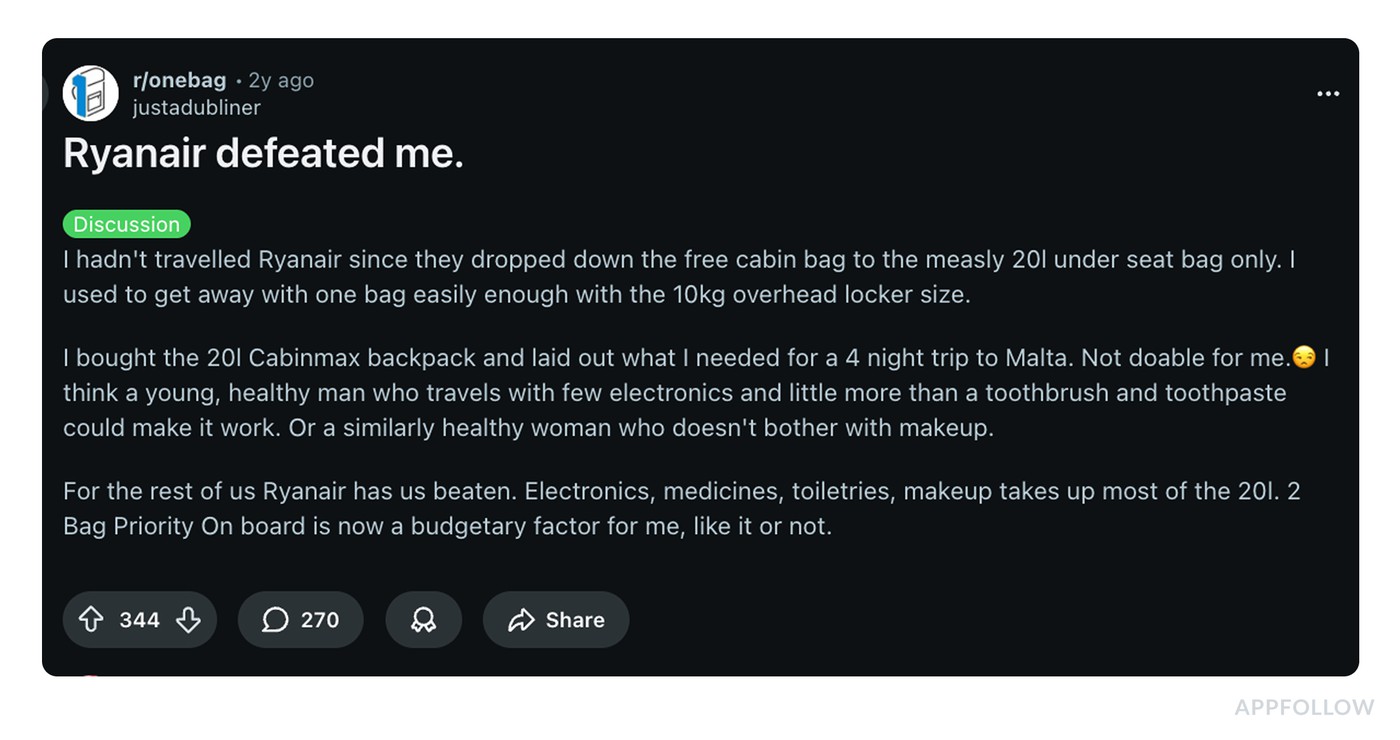

Reddit is where sentiment stops being a one-liner and turns into a whole story. Take this thread.

The headline “Ryanair defeated me” is really a long-form narrative about a policy change, an attempted workaround, and the final emotional outcome: resignation. Then the comments pile in with “here’s how I beat the system” hacks, jokes, and extra context.

This is a customer sentiment analysis example brands can’t handle by skimming. Support teams usually pull Reddit into a listening workflow using tools like Brandwatch, Talkwalker, Sprinklr, Meltwater, Sprout Social, plus native Reddit search and saved queries for brand + policy keywords (baggage size, “one bag,” “priority,” “overhead,” “fees”).

The goal is to read the thread as a cluster and measure what’s spreading.

What they extract from this kind of discussion:

- Theme: “I used to get value, now I feel forced into paying for priority.” That’s fairness sentiment, not a feature request.

- Emotion arc: frustration → bargaining (trying the 20L bag) → defeat/acceptance. That arc matters because it predicts behavior. People don’t rage-quit. They quietly pay the fee or quietly avoid you.

- Workarounds: commenters share hacks like “wear the bag differently,” “use a coat with deep pockets,” “hide behind disorganized passengers.” That’s not noise. It tells you customers are actively problem-solving around your constraints, which usually means the policy is painful but demand is still there.

- Who’s affected: the OP explicitly calls out that the rule is easier for “young, healthy” minimalists, harder for everyone carrying meds, toiletries, electronics. That’s segmentation insight you can use in messaging.

Then the action loop kicks in. CX logs this as a recurring “policy friction” topic. Support updates macros and help-center copy with the exact confusion points people keep repeating. Product or Ops gets the signal: if the top workaround is “how to sneak a bigger bag,” the rule is generating adversarial behavior, which usually increases gate conflict and hurts staff experience too. Marketing adjusts expectations up front, because surprise is what turns “I paid” into “you defeated me.”

Customer support sentiment analysis example

Someone’s locked out, charged twice, panicking about data, or trying to cancel while they’re half-asleep on their phone.

That’s why a simple positive or negative label can be misleading.

- A ticket can start angry and end delighted in ten minutes.

- A chat can sound polite while the customer is clearly stuck.

- A call transcript can look neutral until you catch the same phrase repeated three times, which is usually frustration with a customer-service voice.

The job of customer support sentiment analysis is to track that emotional journey at scale and tie it to something actionable. Which issues create anxiety. Which workflows trigger rage. Which agents consistently de-escalate. Where a “resolved” ticket still leaves the customer annoyed, so churn risk stays high.
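One way to capture that journey is to label the arc of a ticket instead of a single message. This is a minimal sketch, assuming each customer message already has a sentiment score between -1 and 1 from an upstream model; the `ticket_arc` function and its label names are hypothetical.

```python
def ticket_arc(scores: list[float]) -> str:
    """Label a ticket from per-message sentiment scores in -1..1 (assumed upstream)."""
    if not scores:
        raise ValueError("ticket has no scored customer messages")
    start, end = scores[0], scores[-1]
    if start < 0 and end >= 0:
        return "recovered"            # started angry, ended neutral or satisfied
    if start >= 0 and end < 0:
        return "deteriorated"         # polite opener, unhappy close
    if end < 0:
        return "unresolved_negative"  # "resolved" on paper, customer still annoyed
    return "stable"

print(ticket_arc([-0.8, -0.4, 0.1, 0.6]))  # recovered
print(ticket_arc([0.2, -0.1, -0.5]))       # deteriorated
print(ticket_arc([-0.6, 0.2, -0.1]))       # unresolved_negative
print(ticket_arc([0.3, 0.4]))              # stable
```

Counting `unresolved_negative` tickets per issue type is one way to find the "resolved but still annoyed" cases that keep churn risk high.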

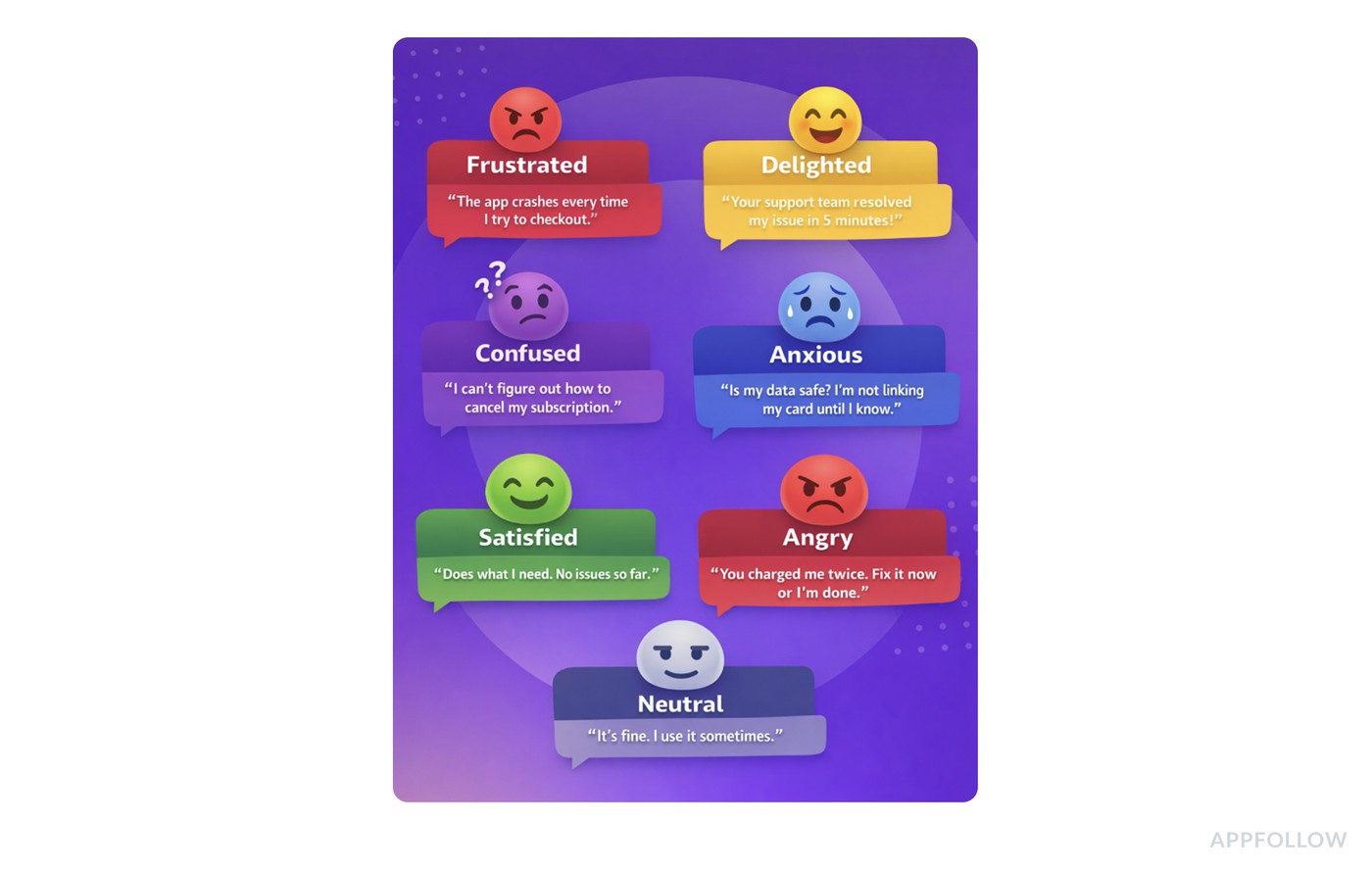

Example 7: Support ticket categorization

Support ticket sentiment isn’t about reading every message like a therapist. Brands don’t have time for that. The way it works is batch analysis. Pick a period, pull the tickets, let the system cluster what happened, then use the patterns to tighten your workflow.

Here’s a clean customer sentiment analysis example of what “urgent negative sentiment flagging” looks like in a real queue:

One ticket like that is annoying. Fifty in a week is a trend with revenue risk.

So support teams run categorization in tools they already live in, usually Zendesk, Intercom, Freshdesk, Salesforce Service Cloud, Help Scout, sometimes with analytics layers like Gong (for calls), Chorus, or a BI setup pulling ticket exports into a dashboard. The sentiment layer can come from built-in AI features, add-ons, or an NLP pipeline that scores text and tags it by intent.

The analysis flow is simple and fast:

- They pull a batch for the last 7 or 30 days, then categorize tickets by topic (billing, login, cancellation, bugs) and emotion (angry, anxious, frustrated, neutral).

- Next, they filter for “urgent + negative,” usually defined by language signals like ASAP, dispute, chargeback, cancel, lawsuit, scam, never again plus a low sentiment score.

That’s how they find the tickets that can turn into churn, refunds, and public complaints.
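The "urgent + negative" filter is simple enough to sketch directly. The keyword list comes from the step above; the 0..1 sentiment scale, the 0.3 cutoff, and the sample tickets are assumptions for illustration.

```python
import re

# Language signals listed in the article. Whole-word matching only, so variants
# such as "cancelled" would need their own entries.
URGENT = re.compile(
    r"\b(asap|dispute|chargeback|cancel|lawsuit|scam|never again)\b", re.IGNORECASE
)

def is_urgent_negative(text: str, sentiment: float, threshold: float = 0.3) -> bool:
    """Flag only when an urgency keyword AND a low sentiment score coincide."""
    return bool(URGENT.search(text)) and sentiment < threshold

# Hypothetical tickets: (text, sentiment score on an assumed 0..1 scale).
tickets = [
    ("Charged twice. Fix this ASAP or I file a chargeback.", 0.05),
    ("How do I cancel my trial? Thanks!", 0.70),
    ("The export button is a bit slow.", 0.25),
]
flagged = [text for text, score in tickets if is_urgent_negative(text, score)]
print(len(flagged))  # 1
```

Requiring both signals keeps a calm "how do I cancel" question out of the escalation queue while still catching the chargeback threat.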

The insights are what you’d expect, but sharper because they’re quantified. Maybe 60% of urgent-negative tickets are billing-related and spike right after a pricing change. Maybe login issues have lower volume but higher urgency because they block access.

Sometimes you discover the biggest driver is lag. The sentiment tanks when the first response time crosses a threshold.
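To test the lag hypothesis, split tickets at a candidate response-time threshold and compare mean sentiment on each side. The data and the 60-minute cutoff below are hypothetical; in practice you would try several thresholds against your own export.

```python
from statistics import mean

# Hypothetical tickets: (first response time in minutes, sentiment score 0..1).
tickets = [(5, 0.8), (12, 0.7), (45, 0.6), (90, 0.3), (180, 0.2), (240, 0.1)]

def sentiment_by_bucket(rows, threshold_min):
    """Mean sentiment for tickets answered within vs. after `threshold_min`.

    Raises statistics.StatisticsError if either bucket is empty.
    """
    fast = [s for t, s in rows if t <= threshold_min]
    slow = [s for t, s in rows if t > threshold_min]
    return mean(fast), mean(slow)

fast, slow = sentiment_by_bucket(tickets, threshold_min=60)
print(f"within 60 min: {fast:.2f}, after 60 min: {slow:.2f}")
# within 60 min: 0.70, after 60 min: 0.20
```

A large gap between the two buckets points at staffing or routing rather than the product, which changes who owns the fix.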

How do they use this knowledge?

- Billing gets a dedicated route and faster SLA. Login issues get a stricter escalation path and a “known issue” banner if it’s widespread.

- Agents get macros that de-escalate without sounding robotic.

- Product receives a weekly summary with the top negative clusters and example language customers keep repeating.

The point of doing it in batches is momentum. You’re not trying to psychoanalyze every ticket.

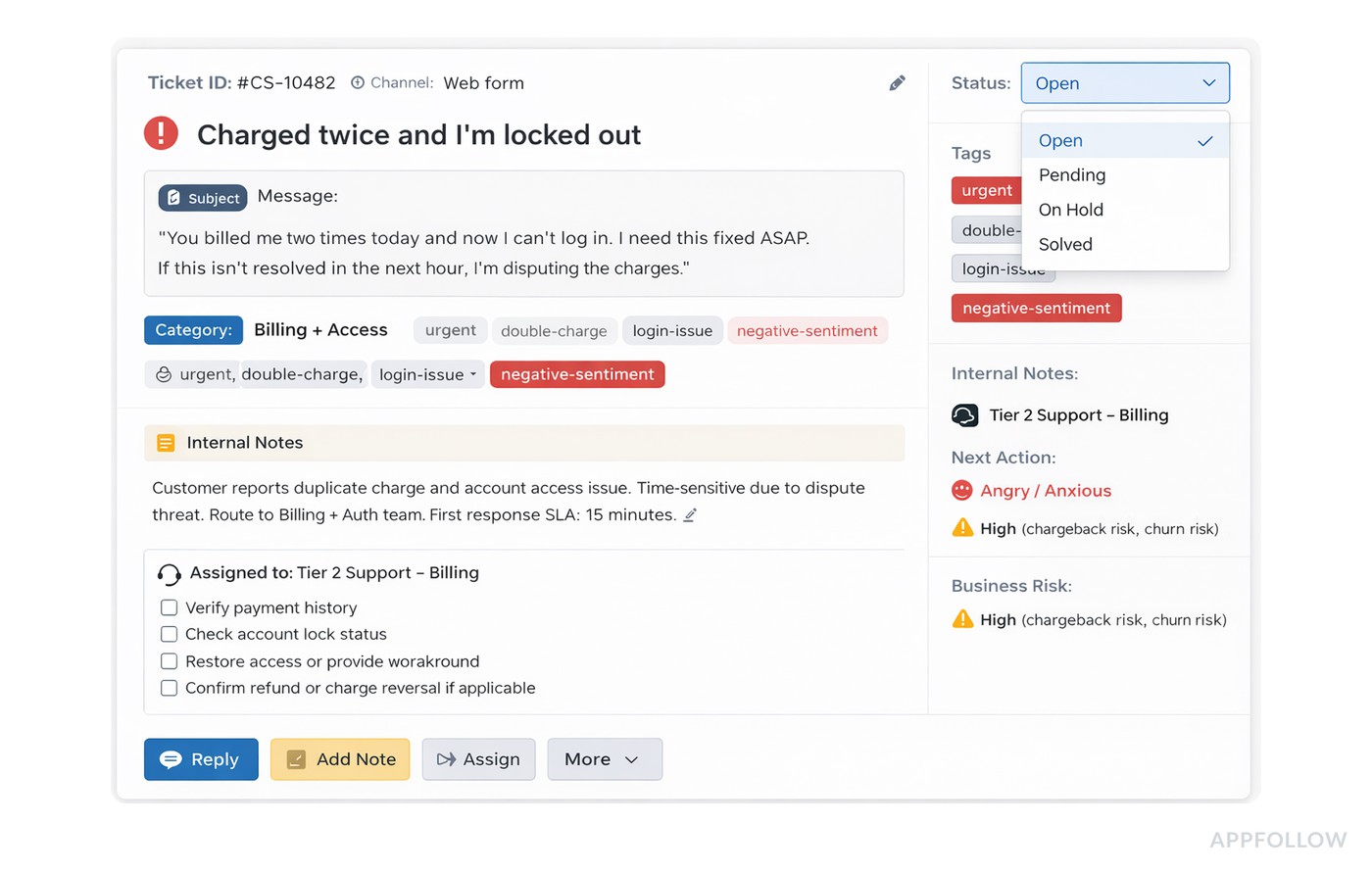

How to understand customer emotions

Before you label anything, decide what “emotion” means in your world. Keep it practical. An emotion wheel is great, but you don’t need 48 nuanced feelings to run a weekly feedback loop. Most teams do fine with a short set like: frustrated, delighted, confused, anxious, satisfied, angry, neutral.

Now map those to real signals across channels. Quick customer emotions examples you’ll recognize instantly:

When everyone uses the same labels, trends become real and routing becomes easy.

Determining emotions: manual reading vs. system-assisted tagging

Most brands start by doing it the human way: read samples, agree on definitions, calibrate as a team. That’s still valuable because you catch context. Sarcasm. Cultural phrasing. The “I love it but…” reviews look positive until you see the blocker.

Then the scale problem hits. Hundreds of reviews, 40% in other languages, and suddenly your “weekly sentiment check” becomes a half-day meeting.

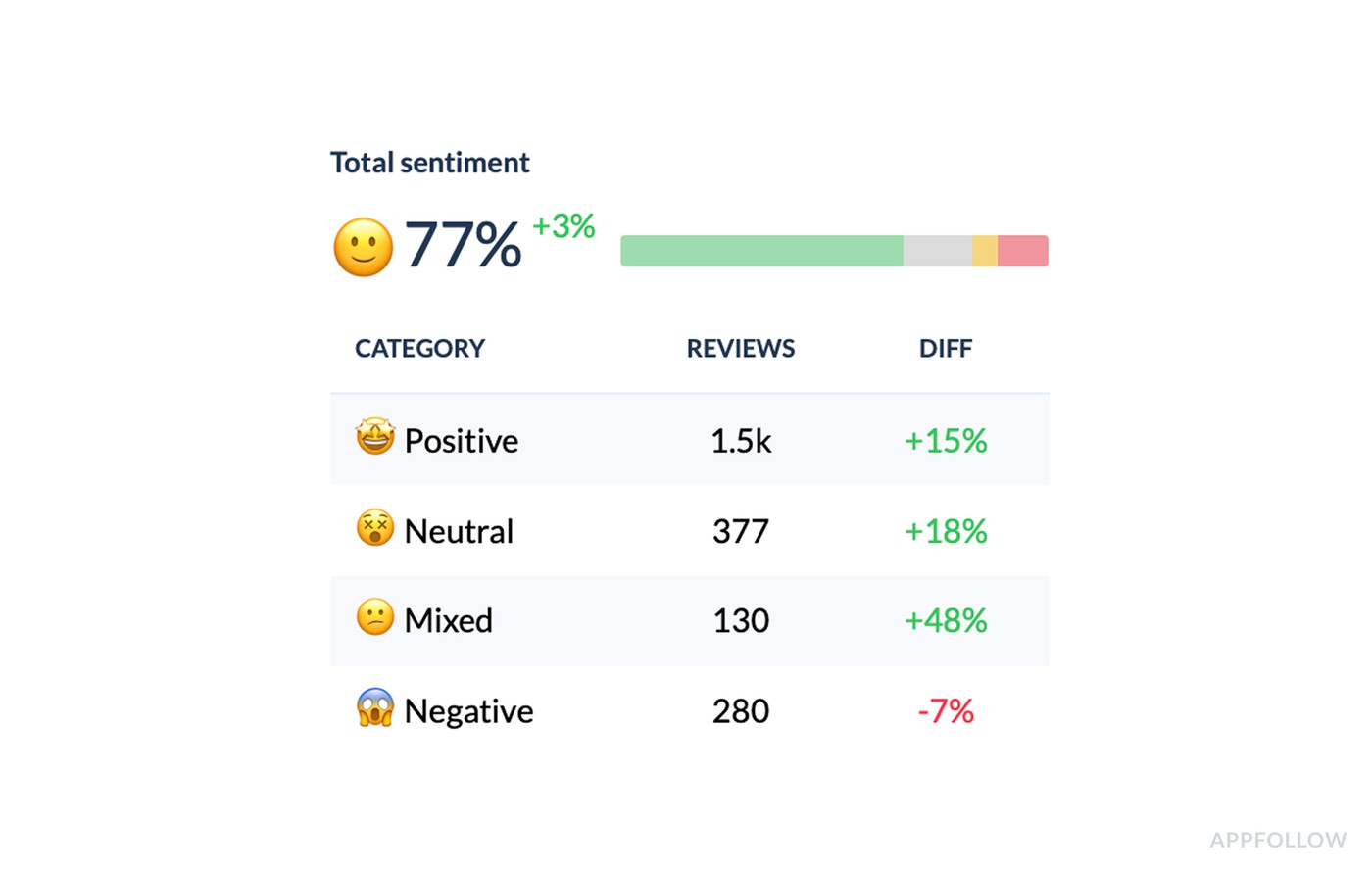

This is where systems help. Review management tools like AppFollow can surface emotion at a glance by showing it as an emoji plus a percentage score, so you can scan clusters without reading every line, then click into samples only where it’s worth it.

You still keep a human in the loop. You just stop doing the boring parts by hand.

If you’re dealing with App Store and Google Play reviews and want that emotion layer baked into the workflow, start a free trial in AppFollow and run your next review triage with actual trends, not gut feel.


FAQs

What is an example of customer sentiment?

A simple example of customer sentiment is an app review that reads: “Love the lessons, but the ads are getting out of hand.” The sentiment isn’t “good” or “bad” in one word. It’s positive about value, negative about friction, and it tells you exactly what’s shaping the user’s mood.

What is an example of a sentiment analysis?

A solid customer sentiment analysis example is when you take 500 app reviews from the last 30 days, tag them by theme (pricing, crashes, onboarding), then measure how sentiment and star rating shift per tag. If “Doesn’t Work” is 23% of mentions and tracks the lowest sentiment, you’ve found the real driver behind the rating drop.

Can ChatGPT do sentiment analysis?

Yes, ChatGPT can help draft, classify, and summarize sentiment from text, but it’s best used as an assistant, not your single source of truth. In customer service, it can label tickets as frustrated vs. anxious, flag urgency language, and cluster themes. In production, teams usually combine that with their own support data, tagging rules, and QA checks to keep results consistent.

What is a real life example of sentiment analysis?

A real-world use case is social listening during a product change. You track posts and comments, then map emotions like frustrated, confused, or delighted to what people are reacting to. Those emotion labels become actionable when you can say, “Confusion spiked after the pricing update,” and then fix the messaging or flow.