Guessing what mobile app players want? Your reviews already told you.


Your product team spent three hours in a meeting last week arguing about whether to fix the tutorial or add new content first. Someone suggested running a player survey; another person wanted to look at analytics. Meanwhile, 2,400 reviews came in during those three hours telling you exactly what's broken and what players want, but nobody read them.

Happens!

Developers have all the data they need sitting in their app store reviews but treat it like background noise instead of the product roadmap source that it is. The information is there, you're just not using it because reading thousands of reviews manually is impossible and spotting patterns without tools is guessing dressed up as analysis.

How can we do this better, you ask? Here’s how.

What your reviews are telling you

Game apps get a predictable mix of review types, and each category contains specific actionable information if you know how to extract it.

Bug reports and crash mentions are the most urgent. Players write things like "crashes every time I try to buy gems" or "freezes on level 15" or "won't load on my Samsung Galaxy S23." These reviews tell you what's broken, often with enough detail to reproduce the issue. When you see 50 reviews mentioning the same crash, that's not a coincidence, that's a critical bug affecting a chunk of your player base.

Feature requests come next. Players want new characters, more levels, different game modes, and quality-of-life improvements like skip buttons or faster animations. Some requests are realistic and others are wishful thinking, but patterns in feature requests reveal what would increase engagement and retention if you built it.

Monetization complaints cluster around pain points: things are too expensive, ads are too frequent, paywalls feel unfair, or there's nothing worth spending money on. The ratio of "too expensive" to "nothing to buy" complaints tells you if you're pushing too hard or not offering enough value. Reviews mentioning monetization from new players versus veterans reveal if your pricing strategy hits too early or feels reasonable after investment.

Difficulty and balance reviews surface when progression feels broken: too grindy, too easy, unfair mechanics, impossible levels. If 200 reviews mention level 47 being unreasonably hard, level 47 needs adjustment. If reviews say early game is boring but late game is great, your onboarding needs work.

Then there’s also positive feedback, the best kind of review. Players tell you what they love about your game, what keeps them playing, and what makes it special compared to alternatives. That tells you what not to change and what to emphasize in your marketing. If players consistently praise your art style or core gameplay loop, don't mess with those things chasing trends.

For multiplayer games, matchmaking and connection problems generate review clusters. Lag complaints, unbalanced teams, long queue times, toxic players. These reviews often spike during specific hours or after infrastructure changes.

The challenge is volume. A successful game might see 1,000 to 5,000 reviews daily across all platforms and countries. Reading even 500 reviews per day takes hours, and you still won't spot patterns reliably because human brains aren't built to process that much unstructured text.

When review spikes mean something broke

Spikes in review activity or sudden sentiment shifts signal that something changed and you need to figure out what.

A sudden increase in negative reviews right after an update means the update broke something. Maybe it introduced a new bug, made an existing problem worse, or changed something players liked. The faster you catch this spike, the faster you can identify what went wrong and push a fix.

Spikes in reviews mentioning specific keywords reveal focused problems. If "login error" appeared in 5 reviews last week and 35 reviews this week, login broke recently. If "too expensive" mentions jump from 20 to 80 reviews after you adjusted pricing, players are rejecting the change. If crash mentions spike but only from Android users, you have a platform-specific bug.
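To make the week-over-week comparison concrete, here is a minimal sketch in Python. It assumes you already have the review texts for two weeks as plain lists of strings. The function names and the `min_ratio` and `min_mentions` thresholds are made up for illustration, so tune them to your own volume.

```python
from collections import Counter

def keyword_counts(reviews, keywords):
    """Count how many reviews mention each keyword (case-insensitive substring match)."""
    counts = Counter()
    for text in reviews:
        lowered = text.lower()
        for kw in keywords:
            if kw.lower() in lowered:
                counts[kw] += 1
    return counts

def find_spikes(last_week, this_week, keywords, min_ratio=3.0, min_mentions=10):
    """Return {keyword: (previous_count, current_count)} for keywords that spiked.

    min_ratio and min_mentions are illustrative defaults, not recommended values.
    """
    before = keyword_counts(last_week, keywords)
    after = keyword_counts(this_week, keywords)
    spikes = {}
    for kw in keywords:
        prev, curr = before[kw], after[kw]
        # max(prev, 1) avoids dividing by zero when a keyword is brand new
        if curr >= min_mentions and curr / max(prev, 1) >= min_ratio:
            spikes[kw] = (prev, curr)
    return spikes

last_week = ["login error again"] * 5 + ["way too expensive"] * 20
this_week = ["Login error on launch"] * 35 + ["too expensive for me"] * 22
print(find_spikes(last_week, this_week, ["login error", "too expensive"]))
```

With this sample data, "login error" gets flagged (5 to 35 mentions) while "too expensive" does not, because 20 to 22 is normal noise rather than a spike.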

Review spikes also happen after marketing campaigns, app store featuring, or content updates. These aren't necessarily bad, they just mean more people are playing and leaving feedback. The key is distinguishing between "more reviews because more players" and "more negative reviews because something is wrong."

The problem with manual monitoring is lag time. By the time someone on your team notices a review spike, reads enough reviews to understand what's happening, and escalates to the right people, days have passed. Your rating already dropped. Players already quit. The damage compounds while you're still figuring out what broke.

Only 10-30% of gamers write reviews, which means every review you see stands in for roughly 3-10 players who hit the same issue, most of whom didn't bother writing about it. A spike of 50 negative reviews about crashes suggests that 150-500 players hit that crash.

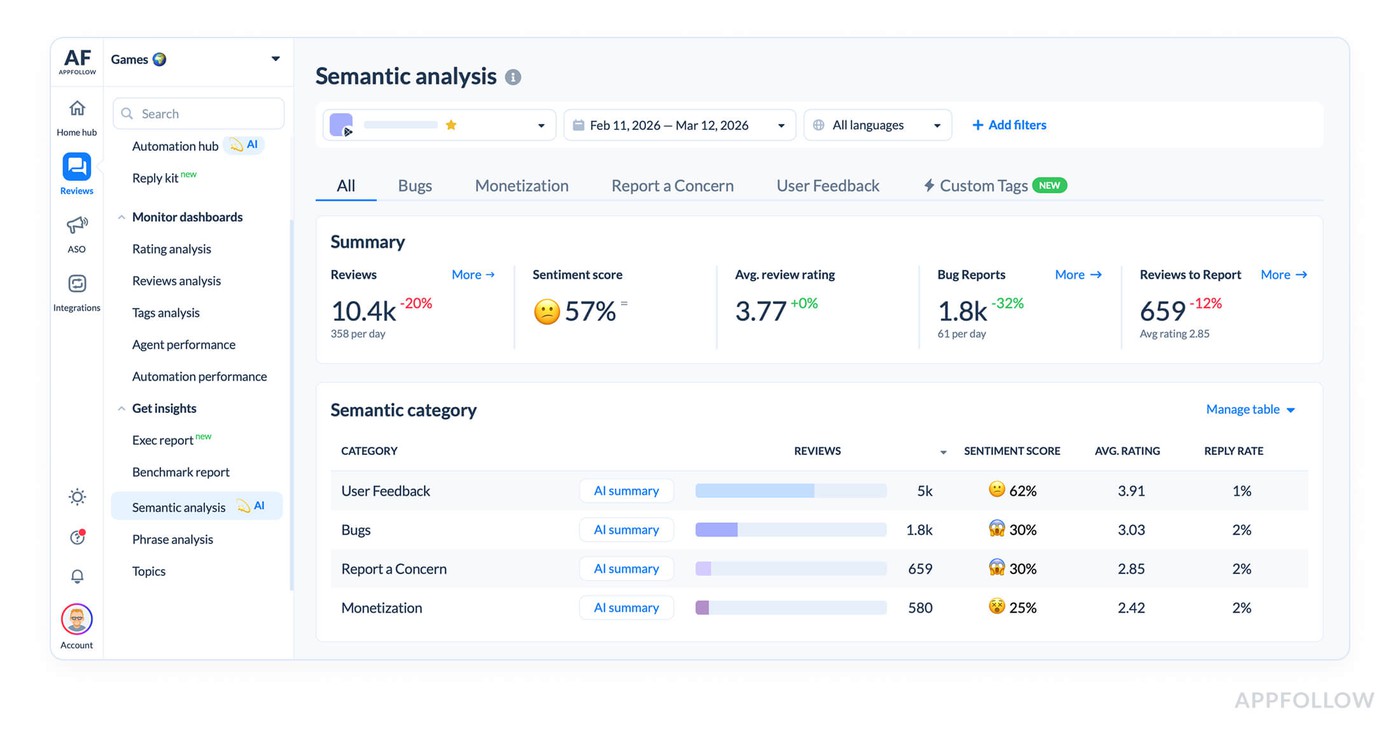

Why sentiment matters more than star ratings

A game sitting at 4.2 stars looks fine until you see that sentiment has been trending negative for two weeks, which means your rating is about to drop.

Sentiment tracking measures the ratio of positive to negative feelings expressed in reviews over time. You can have a stable 4-star rating while sentiment shifts from 70% positive to 50% positive, which predicts your rating will fall soon as the negative reviews accumulate.
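As a rough sketch of what "tracking sentiment over time" means in practice, the Python below computes the share of positive reviews per week. It assumes each review has already been labeled with a sentiment by some upstream classifier. The `(week, sentiment)` tuple format and the function name are hypothetical.

```python
from collections import defaultdict

def weekly_positive_share(reviews):
    """reviews: iterable of (week_label, sentiment) pairs.

    Any sentiment other than "positive" counts against the positive share.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for week, sentiment in reviews:
        totals[week] += 1
        if sentiment == "positive":
            positives[week] += 1
    return {week: positives[week] / totals[week] for week in totals}

reviews = (
    [("week 1", "positive")] * 7 + [("week 1", "negative")] * 3
    + [("week 2", "positive")] * 5 + [("week 2", "negative")] * 5
)
print(weekly_positive_share(reviews))  # {'week 1': 0.7, 'week 2': 0.5}
```

The average star rating could look identical across both weeks here. The drop from 70% to 50% positive is the early warning.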

A player might leave 3 stars with positive sentiment because they love the game but encountered a frustrating bug. Another player leaves 3 stars with negative sentiment because they hate the monetization. Both ratings look identical but they require completely different responses.

Review volume trends often correlate with major app releases or promotional activities. Tracking sentiment around these events tells you if your updates are landing well or making things worse. If you push a new character and sentiment goes up, the update landed.

Overall sentiment might be neutral while sentiment around monetization is very negative. That tells you where to focus. Or overall sentiment is positive but sentiment from new players is negative while veteran players love it, which means your onboarding is the problem, not the core game.

Sentiment shifts also predict churn before it shows up in your retention metrics. Players complaining about grinding or paywalls are telling you they're about to quit. If you catch this in reviews and adjust before they leave, you save the retention.

The manual approach doesn't work

Some teams try to manage review analysis manually. They have someone read reviews in App Store Connect or Google Play Console, maybe keep a spreadsheet of common complaints, perhaps hold weekly meetings to discuss trends. This breaks down the moment you have any meaningful volume.

Native store consoles show you reviews but don't help you analyze them. You can sort by rating and read your 1-star reviews, but you can't easily spot that 40 of them mention the same bug unless you read all 40 and remember the details. There's no sentiment tracking, no automatic categorization, no way to see if complaint volumes are increasing or decreasing over time.

Copying reviews into a spreadsheet and manually tagging them works fine for 50 reviews. At 500 reviews per day you've created a full-time job that still doesn't give you real-time insights. By the time you've tagged Monday's reviews it's Wednesday and you've missed two days of potentially critical feedback.

Humans are bad at spotting trends in large datasets. You might remember that several reviews mentioned crashes, but you won't reliably remember that crash mentions increased 300% this week versus last week, or that they cluster on specific devices, or that they started right after the update you pushed Tuesday.

You need automation and AI to handle this at scale, and not because automation is fancy but because the alternative is either hiring a team of people to read reviews full-time or accepting that you're flying blind on player feedback.

How AppFollow handles reviews at scale

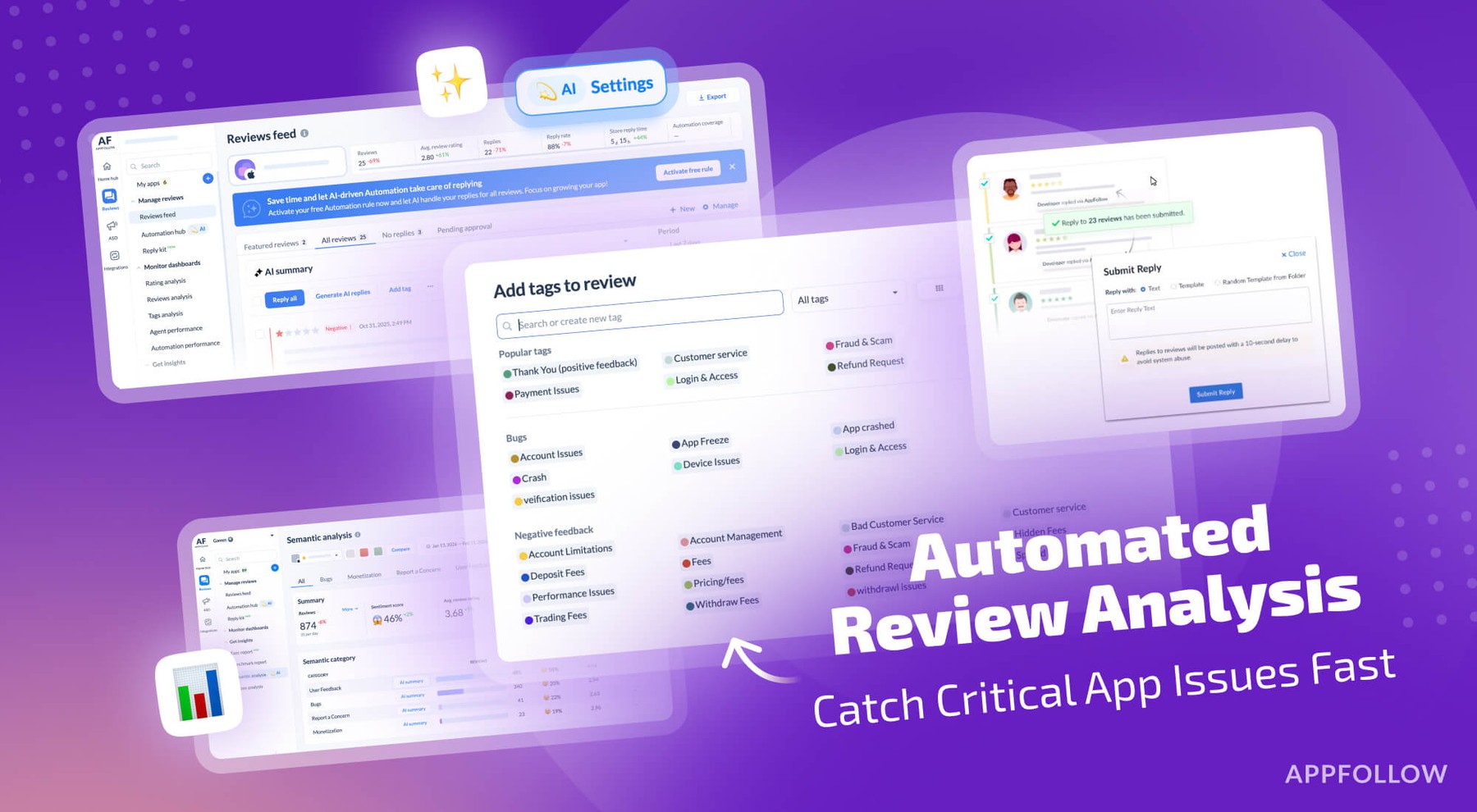

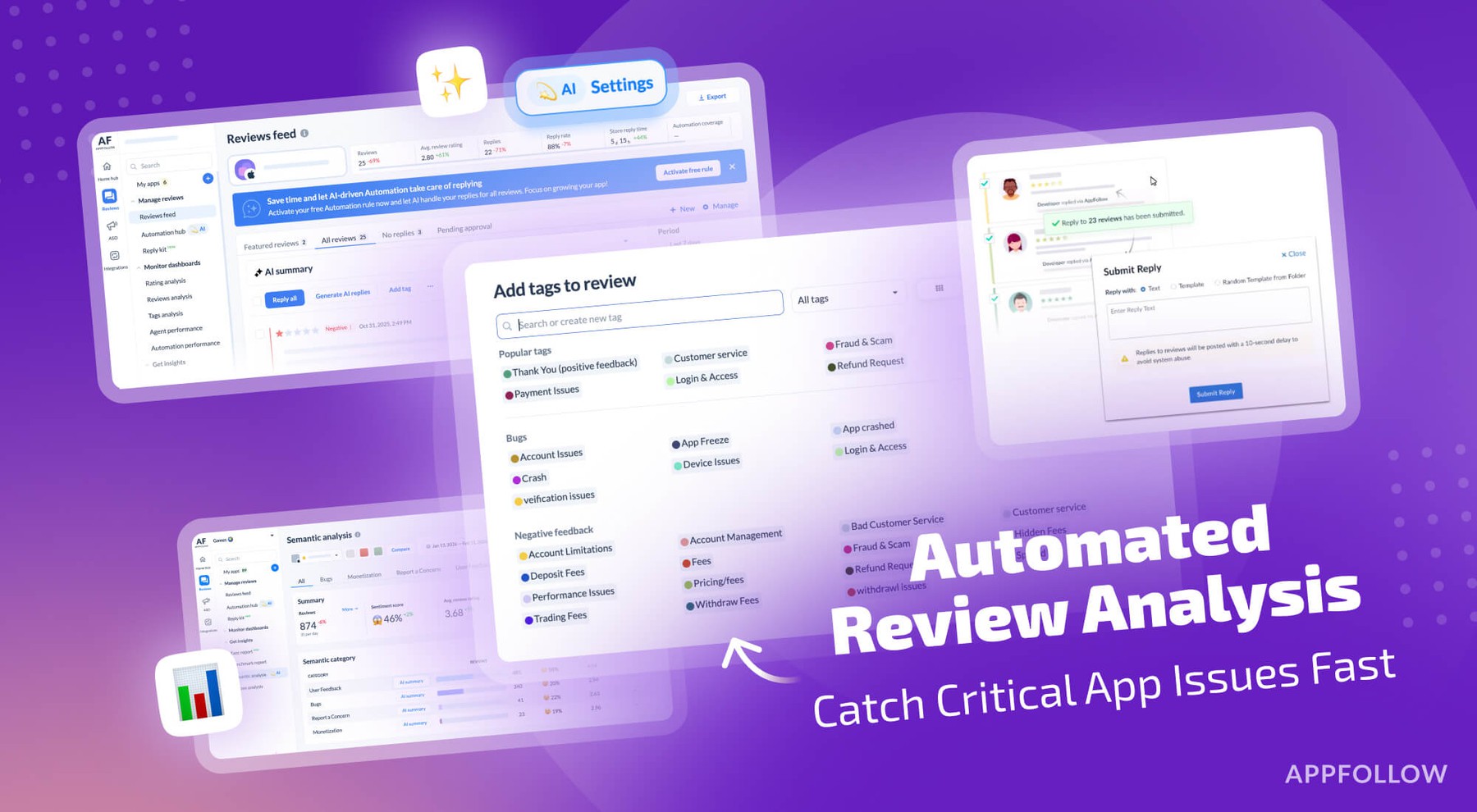

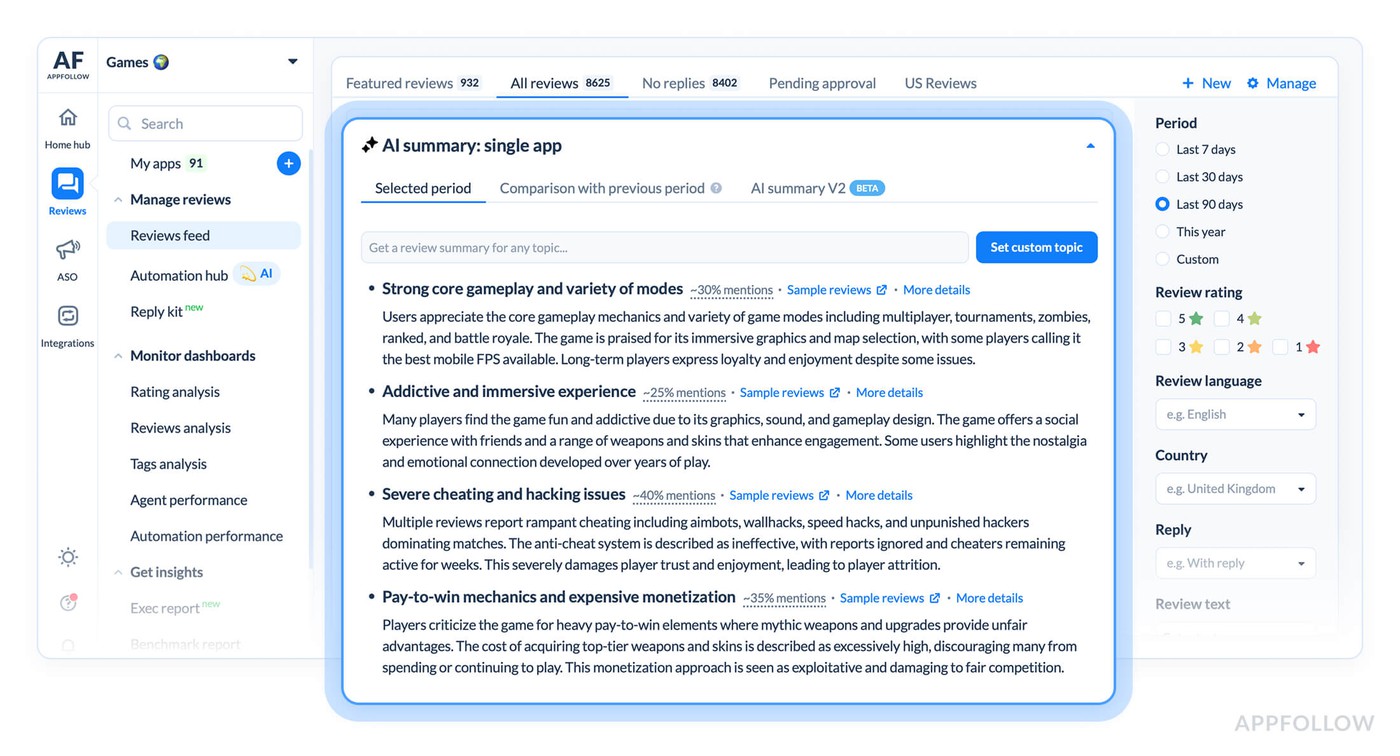

AppFollow solves the volume and pattern recognition problems with a combination of automatic categorization, AI analysis, and alerting that surfaces problems before they blow up.

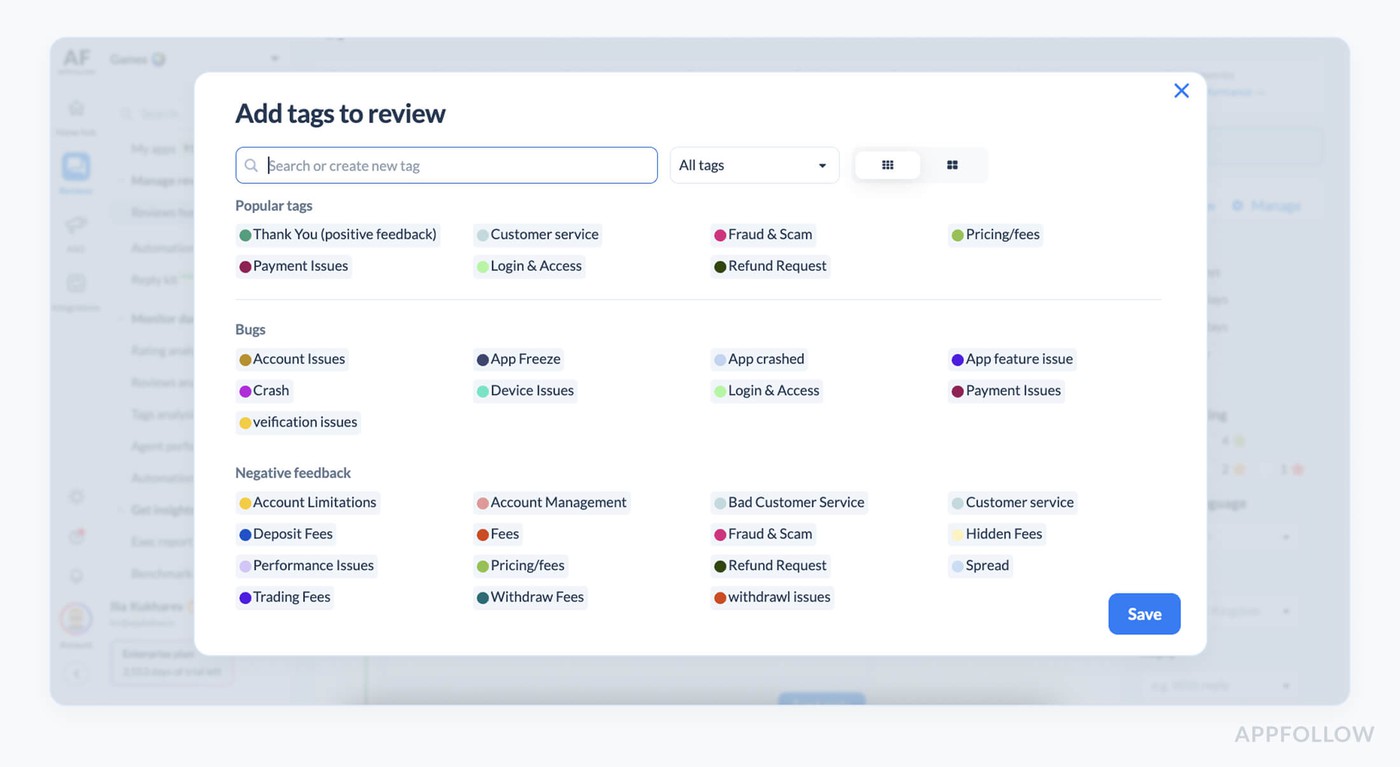

Auto-tags categorize reviews automatically based on content. You set up tags for bugs, crashes, payment issues, feature requests, balance complaints, monetization problems, positive feedback, and any game-specific categories that matter. Every review that comes in gets tagged automatically so you can filter and count without reading everything.
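To show the general idea of rule-based tagging, here is a simplified Python sketch. This is not how AppFollow implements auto-tags. The `TAG_RULES` phrases and the `tag_review` function are invented for illustration, and plain keyword matching like this is exactly the kind of approach that misses context.

```python
# Hypothetical tag rules: each tag fires if any of its phrases appears in the review.
TAG_RULES = {
    "crash": ["crash", "freeze", "won't load"],
    "monetization": ["too expensive", "paywall", "too many ads"],
    "difficulty": ["too hard", "impossible", "grind"],
}

def tag_review(text, rules=TAG_RULES):
    """Return the sorted list of tags whose phrases appear in the review text."""
    lowered = text.lower()
    return sorted(
        tag for tag, phrases in rules.items()
        if any(phrase in lowered for phrase in phrases)
    )

print(tag_review("Crashes every time I try to buy gems"))
print(tag_review("Level 47 is impossible and the paywall feels unfair"))
print(tag_review("Love the art style"))
```

Once every review carries tags, counting and filtering becomes a one-liner, which is what makes week-over-week comparisons possible.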

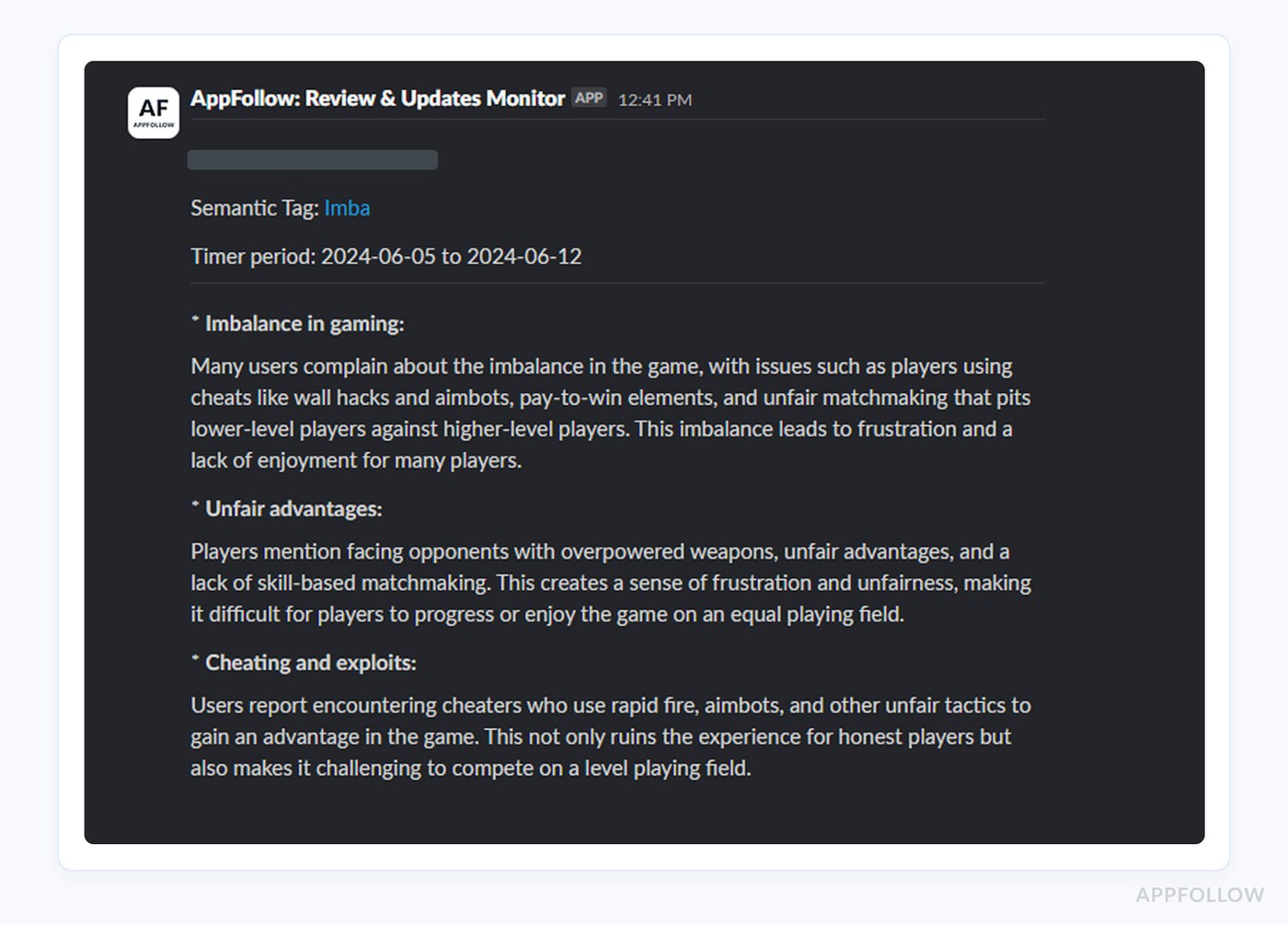

Gaming-specific tags, such as monetization complaints, difficulty issues, matchmaking problems, and content requests, are where this pays off. When you want to know how many people complained about difficulty this week, you filter by the difficulty tag and see the count. When you want to know if crash mentions are increasing, you compare crash-tagged reviews week over week.

The real value comes from cross-referencing tags with other data. Reviews tagged "crash" that also mention Android 13 tell you about a device-specific bug. Reviews tagged "too expensive" from new players mean your monetization hits too early. Reviews tagged "boring" after level 20 mean your mid-game content needs work.

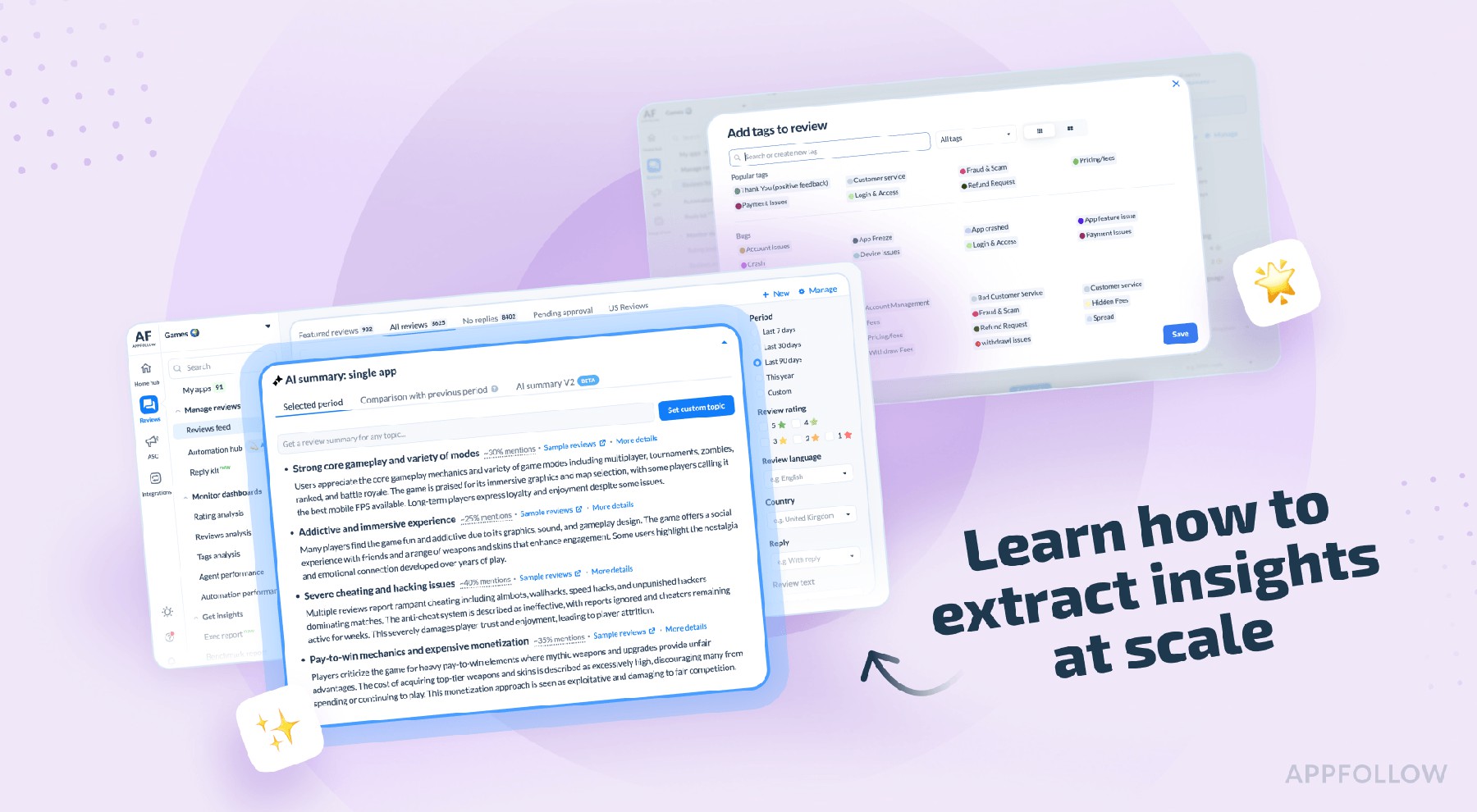

AI summaries let you understand hundreds of reviews in seconds instead of hours. You pull up the last 200 reviews and generate an AI summary that tells you the main themes: 60 reviews mention checkout failures, 45 mention energy system complaints, 30 praise new characters, 25 report lag issues. You know what's happening without reading 200 individual reviews.

This matters during review spikes. When negative reviews jump from 50 to 200 overnight, you don't have time to read 200 reviews to understand what broke. You generate an AI summary, see that 120 of them mention the same login bug, and escalate to engineering immediately.

Semantic analysis understands context and meaning beyond simple keyword matching. It catches the difference between "this game is killer" and "this game kills my battery in 20 minutes." Both contain the word "kill" but semantic analysis knows one is positive and one is negative, so your automation doesn't misclassify them.

Semantic analysis also spots emerging problems before they become obvious. If reviews start using phrases like "feels unfair" or "forced to pay" more frequently, even without explicitly saying "too expensive," the system flags increasing monetization frustration. You catch the trend before it turns into a rating disaster.

Alerts notify you when something needs attention. You set thresholds for things that matter: crash-tagged reviews above 15 per hour, rating drops more than 0.2 stars in 24 hours, negative reviews in Brazil spiking above baseline. When the alert fires, you investigate immediately instead of discovering the problem three days later during your weekly review meeting.

The alerts connect to Slack or email so your team sees problems in real time. Engineering gets notified about technical issues. Product team gets notified about feature request trends. Support gets notified about sentiment drops requiring response focus.
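Threshold alerts boil down to a few comparisons. The Python sketch below uses the example thresholds from above (15 crash-tagged reviews per hour, a 0.2-star drop in 24 hours). The `Thresholds` class, the `check_alerts` function, and the alert names are hypothetical. Delivery to Slack or email is left out.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Thresholds:
    # Example values taken from the text; adjust to your game's baseline.
    max_crash_reviews_per_hour: int = 15
    max_rating_drop_24h: float = 0.2

def check_alerts(crash_reviews_last_hour, rating_24h_ago, rating_now,
                 thresholds=Thresholds()):
    """Return the names of the alerts that should fire for the current numbers."""
    alerts = []
    if crash_reviews_last_hour > thresholds.max_crash_reviews_per_hour:
        alerts.append("crash_volume")
    if rating_24h_ago - rating_now > thresholds.max_rating_drop_24h:
        alerts.append("rating_drop")
    return alerts

print(check_alerts(crash_reviews_last_hour=20, rating_24h_ago=4.5, rating_now=4.2))
print(check_alerts(crash_reviews_last_hour=10, rating_24h_ago=4.5, rating_now=4.4))
```

The first call fires both alerts and the second fires none. In a real setup, each alert name would map to the team that needs to see it.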

Reply effect tracking shows you if responding to complaints helps. When you respond to a 1-star crash report and the player updates it to 4 stars after you fix the bug, that's positive reply effect. If you're responding to monetization complaints but nobody updates their reviews, either your responses aren't helping or you're not fixing the underlying problem. The metric tells you if your review management is working.
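One plausible way to put a number on this is sketched below in Python. It is an assumption about how you might measure it yourself, not AppFollow's actual reply effect formula. Each replied-to review is a pair: the rating before your reply, and the rating after it (or `None` if the player never updated).

```python
def reply_effect(replied_reviews):
    """replied_reviews: list of (rating_before, rating_after_or_None) pairs.

    Returns (update_rate, average_rating_change_among_updated_reviews).
    """
    updated = [(before, after) for before, after in replied_reviews if after is not None]
    if not updated:
        return 0.0, 0.0
    update_rate = len(updated) / len(replied_reviews)
    avg_change = sum(after - before for before, after in updated) / len(updated)
    return update_rate, avg_change

# Four replies: two players updated their rating, two did not.
print(reply_effect([(1, 4), (2, None), (1, None), (3, 4)]))  # (0.5, 2.0)
```

A low update rate or an average change near zero suggests the replies aren't landing or the underlying problem is still there.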

What to do with the insights

Having the data doesn't help if you don't act on it. The point of analyzing reviews at scale is making better decisions faster about what to build, what to fix, and what to leave alone.

Monitor bug reports by severity and frequency. A bug mentioned in 50 reviews needs immediate attention. A weird edge case mentioned twice can wait. Route bug reports tagged by auto-tags directly to your QA team with priority assigned by volume. They fix the issues affecting hundreds of players before they fix the issue affecting three players.
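Prioritizing by volume can be sketched in a few lines of Python. The example assumes each bug-tagged review has already been mapped to a short bug label. The `prioritize_bugs` function and the cutoff of 50 mentions (borrowed from the example above) are illustrative, not a rule.

```python
from collections import Counter

def prioritize_bugs(bug_labels, urgent_at=50):
    """bug_labels: one label per bug-tagged review.

    Returns (label, mention_count, priority) tuples, most-mentioned first.
    """
    counts = Counter(bug_labels)
    return [
        (bug, n, "urgent" if n >= urgent_at else "backlog")
        for bug, n in counts.most_common()
    ]

labels = ["checkout crash"] * 50 + ["typo in credits"] * 2
for row in prioritize_bugs(labels):
    print(row)
```

The checkout crash lands at the top as urgent, and the typo goes to the backlog.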

Share feature request summaries with your product team monthly. Pull all feature-request-tagged reviews, generate an AI summary, and see what players want most. If 300 reviews ask for a feature and only 20 ask for another feature, that tells you where demand is stronger. You still make the final call on what to build but at least you're making it with data instead of assumptions.

Track sentiment after updates to know if changes land well. You adjust monetization and check if reviews mentioning pricing get more positive or more negative. You redesign the tutorial and see if reviews from new players improve. If sentiment doesn't improve or gets worse, you know the change didn't work and you can iterate or revert.

Use benchmark data to understand if your problems are worse than the market. If your game has 15% of reviews mentioning ads negatively and competitors average 25%, you're doing better than the market. If you're at 40%, you have a problem that needs fixing. Benchmarking prevents you from chasing problems that aren't problems or ignoring issues that are killing you relative to alternatives.

Cross-reference review insights with analytics to understand cause and effect. Reviews say level 30 is too hard, analytics show retention drops at level 30. The reviews told you why retention is dropping. Reviews say new players get confused, analytics show day-1 retention is terrible. Reviews explained the analytics.

Export tagged reviews for detailed analysis when you need it. Maybe you're redesigning monetization and want to read every monetization-complaint-tagged review from the last quarter to understand pain points. The tags let you pull exactly those reviews without manually searching through thousands of unrelated feedback.

The business case for caring

Reviews that sit unanalyzed represent leaked revenue from problems you could have fixed and opportunities you could have captured.

When your game drops from 4.5 stars to 3.8 stars because you didn't catch a critical bug fast enough, organic downloads fall. Every day you sit at 3.8 stars costs you potential players who would have downloaded at 4.5 stars.

Retention problems show up in reviews before they show up in your analytics. Players tell you they're frustrated with grinding or paywalls before they quit. If you catch this feedback and adjust, you save some of those players.

Building the features that 500 reviews asked for is a safer bet than building features nobody mentioned, because at least you know 500 players want them. It won't guarantee success, but it improves your odds versus purely guessing.

If 300 reviews praise your art style and you're considering a visual overhaul, maybe reconsider. If reviews consistently say your core loop is addictive, don't change the core loop to copy a competitor's mechanics.

So instead of three-hour meetings debating what players want, you pull review summaries and see what players said they want. Decisions get faster and more confident because they're based on feedback from thousands of players instead of the loudest person in the meeting.

Stop guessing and start listening

Every game developer wants to know what players think. Most of them already have thousands of data points telling them exactly that, they just don't have systems to process the data into insights.

Your reviews contain your product roadmap. They tell you what's broken, what's working, what features players want, what monetization feels fair, and what changes would improve retention. The information is sitting there waiting for you to extract it.

The choice is between continuing to guess what players want based on intuition and internal debates, or setting up systems that surface player feedback automatically at whatever scale you're operating. One approach is hoping you're right. The other is knowing what players told you and deciding what to do with that information.

FAQs

What types of feedback do game developers get in app store reviews?

Game reviews typically fall into several categories: bug reports and crashes, feature requests, monetization complaints, difficulty and balance issues, positive feedback about what's working, matchmaking problems for multiplayer games, and content requests. Bug reports tell you what's broken with enough detail to reproduce issues. Feature requests reveal what would increase engagement. Monetization complaints show whether pricing feels fair or pushy. Successful games can receive 1,000 to 5,000 reviews daily, making manual analysis impossible without automation tools.

How do review spikes indicate problems with your game?

Sudden increases in negative reviews after updates signal that something broke. Spikes in keywords like "login error" or "crashes" reveal focused problems that need immediate attention. If crash mentions jump from 5 reviews to 35 reviews in one week, something broke recently. Review spikes also happen after marketing campaigns or app store featuring, but the key is distinguishing normal volume increases from genuine problems. Since only 10-30% of gamers write reviews according to AppFollow data, a spike of 50 negative reviews suggests that 150-500 players experienced the same issue.

Why is sentiment analysis more useful than star ratings for games?

Star ratings provide a number but sentiment analysis reveals how players feel and predicts rating changes before they happen. A game at 4.2 stars might look stable while sentiment trends negative, predicting an upcoming rating drop. Sentiment tracking measures positive versus negative feelings over time, catching problems early enough to fix them. Two 3-star reviews can have completely different sentiment: one positive from a player who loves the game but hit a bug, another negative from someone who hates monetization. Cross-referencing sentiment with topics like monetization or difficulty makes feedback more actionable.

How does AppFollow help developers analyze app store reviews at scale?

AppFollow uses auto-tags to categorize reviews by type (bugs, features, monetization complaints) automatically, letting developers filter and count feedback without reading everything. AI summaries condense hundreds of reviews into key themes within seconds. Semantic analysis understands context to distinguish between "killer game" and "kills my battery." Alert systems notify teams when crash mentions spike or ratings drop beyond thresholds. Reply effect tracking shows whether responses improve ratings. A bug mentioned in 50 reviews needs immediate attention according to AppFollow data, while edge cases mentioned twice can wait, helping teams prioritize fixes that affect the most players.