Measuring Your Support Team’s Success: How to Set Up and Analyse Your KPIs

We're now getting to the most interesting part of our academy: measuring your Support team’s results. In the first part of the academy, we mentioned several crucial metrics: Average Rating, Average Reviews Rating, Average New Rating, and Reply Effect. There are other important metrics as well, such as CSAT, Reply Rate, and Reply Time. Any of these metrics can serve as a KPI for your team at different stages of working with feedback. Let's dive into the measurement process, starting with a question from an AppFollow user:

As usual, it's worth mentioning that your Support team’s KPIs will vary at different stages of product development. If you receive a small number of reviews, it’s easier to give each user a personal response, so the main KPIs might be a high Reply Rate (90%+) and a fast Reply Time (1-3 hours). As the volume of reviews grows, the Reply Rate is likely to drop, and your focus could shift to semantic analysis of reviews and identifying growth areas for the product. Here, though, let's look at the Support team's performance through the lens of top-level results, specifically its impact on the average app rating.
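To make the Reply Rate and Reply Time targets concrete, here is a minimal Python sketch. It assumes a hypothetical `Review` record holding the time a review arrived and the time Support replied (if at all). Reply Rate is taken as replied reviews divided by all reviews, and Reply Time as the median delay over replied reviews; both definitions are assumptions for illustration, and AppFollow's own calculation may differ (for example, by using an average instead of a median).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Review:
    # Hypothetical record: when the review arrived and when (if ever) Support replied.
    created_at: datetime
    replied_at: Optional[datetime] = None


def reply_rate(reviews: list[Review]) -> float:
    """Share of reviews that received a reply, in percent."""
    if not reviews:
        return 0.0
    replied = sum(1 for r in reviews if r.replied_at is not None)
    return 100.0 * replied / len(reviews)


def median_reply_time(reviews: list[Review]) -> Optional[timedelta]:
    """Median delay between a review and its reply, over replied reviews only."""
    delays = [r.replied_at - r.created_at for r in reviews if r.replied_at is not None]
    return median(delays) if delays else None


def meets_small_volume_targets(reviews: list[Review],
                               min_rate: float = 90.0,
                               max_hours: float = 3.0) -> bool:
    """Check the hypothetical early-stage targets: 90%+ replied, replies within 3 hours."""
    t = median_reply_time(reviews)
    return (reply_rate(reviews) >= min_rate
            and t is not None
            and t <= timedelta(hours=max_hours))


t0 = datetime(2024, 1, 1, 9, 0)
reviews = [
    Review(t0, t0 + timedelta(hours=1)),
    Review(t0, t0 + timedelta(hours=2)),
    Review(t0),  # still unanswered
]
print(reply_rate(reviews), median_reply_time(reviews), meets_small_volume_targets(reviews))
```

The median is used here because a handful of very late replies would pull a mean far away from what most users actually experience.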

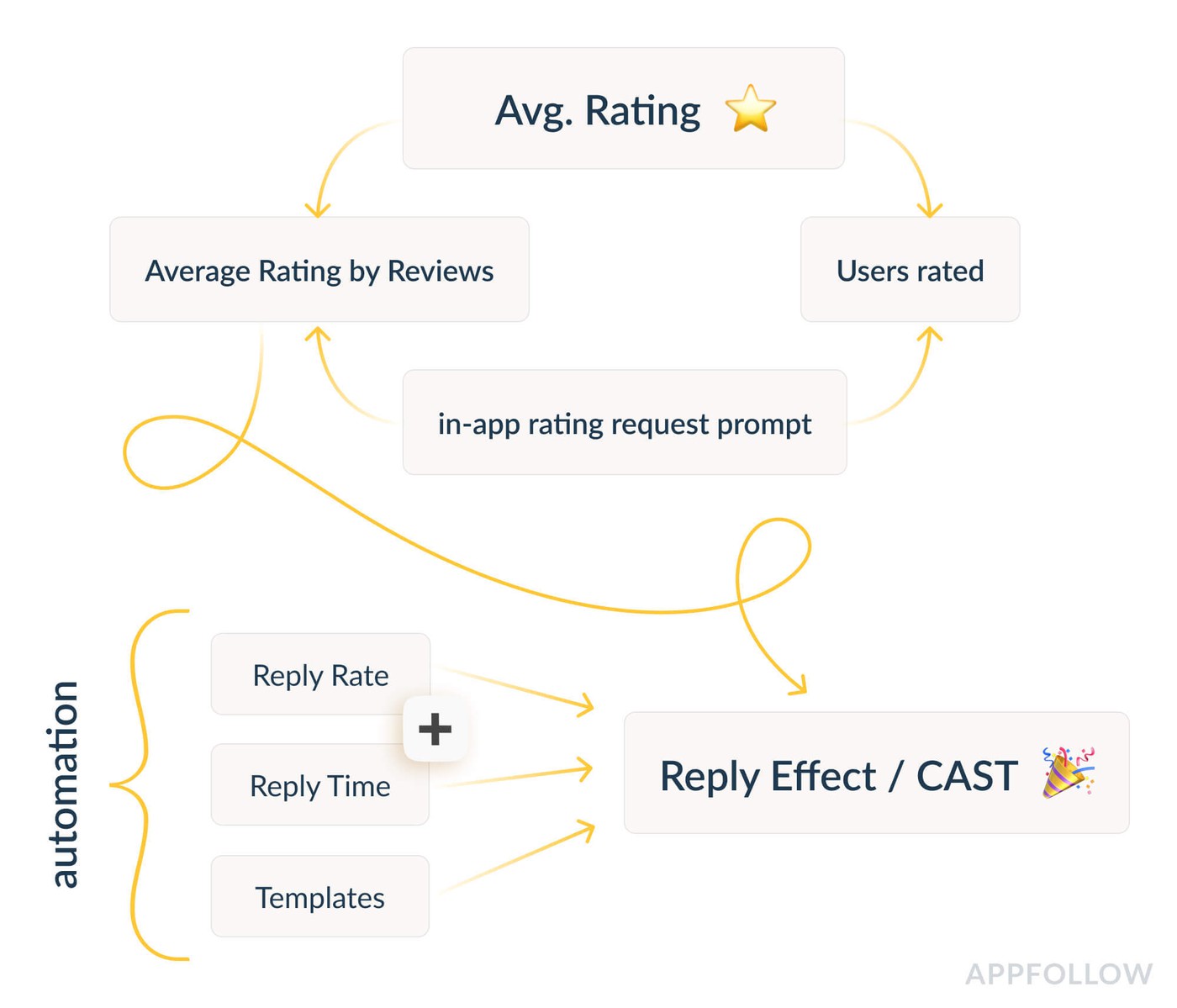

Breakdown of average app rating: Taking a quantity and quality approach to metrics

In the picture above, we've laid out the components and intermediate metrics for working with reviews. We start from the premise that your average rating is built from all incoming ratings. At AppFollow, we distinguish between the average rating of reviews that include text (Average Reviews Rating) and the total number of incoming ratings (the users rated metric). Both of these metrics are directly influenced by when and where you show your in-app rating request prompt: for example, just after a user has finished a certain level, or once they’ve watched a certain number of videos. When experimenting with in-app rating requests, you can therefore measure the results from two angles: quantity and quality.
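The quantity and quality split can be sketched in a few lines of Python. The `Rating` record and its field names are hypothetical, and treating whitespace-only text as "no text" is an assumption; the point is only to show that the number of ratings, the overall average, and the average of text reviews are three separate numbers computed from the same stream.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rating:
    stars: int                  # 1 to 5
    text: Optional[str] = None  # None for a star-only rating


def summarize(ratings: list[Rating]) -> dict:
    """Split incoming ratings into one quantity metric and two quality metrics."""
    all_stars = [r.stars for r in ratings]
    # Only ratings with non-empty text count as written reviews.
    review_stars = [r.stars for r in ratings if r.text and r.text.strip()]
    return {
        "users_rated": len(all_stars),
        "average_rating": sum(all_stars) / len(all_stars) if all_stars else None,
        "average_reviews_rating": (
            sum(review_stars) / len(review_stars) if review_stars else None
        ),
    }


ratings = [
    Rating(5),
    Rating(4),
    Rating(1, "Crashes on launch"),
    Rating(2, "Too many ads"),
]
print(summarize(ratings))
```

In this made-up sample the star-only ratings lift the overall average above the text-review average; real data can show either pattern, which is why it helps to track both.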

Working with in-app rating request prompts

To begin with, both app stores offer their own native solution for implementing this prompt. Using your own custom-designed rating request is prohibited by the guidelines and can lead to serious consequences, up to and including removal of your app from the store. To learn more about the native API method for the App Store, follow this link; similar information for Google Play can be found here.
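With the native APIs (StoreKit's review request on iOS, the Play In-App Review API on Android) you can only *request* the prompt: the system decides whether to show it (on iOS, at most three times per app within a 365-day period), and neither store tells you whether it was displayed. The decision you actually control is when to ask. The Python sketch below models that app-side decision only; `PromptPolicy`, `UserState`, `should_request_review`, and every threshold in them are hypothetical and would be tuned to your own customer journey.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass(frozen=True)
class PromptPolicy:
    """Hypothetical app-side thresholds; tune them for your own product."""
    min_levels_completed: int = 3            # only ask after a meaningful milestone
    max_prompts_per_year: int = 3            # mirrors the App Store's own yearly cap
    min_gap: timedelta = timedelta(days=30)  # wait at least this long between requests


@dataclass
class UserState:
    levels_completed: int
    had_error_this_session: bool
    prompt_history: list[datetime] = field(default_factory=list)


def should_request_review(user: UserState, now: datetime,
                          policy: PromptPolicy = PromptPolicy()) -> bool:
    """Return True if this looks like a good moment to call the native review API."""
    # Never ask right after something went wrong for the user.
    if user.had_error_this_session:
        return False
    # Ask only users who have reached the chosen point in their journey.
    if user.levels_completed < policy.min_levels_completed:
        return False
    # Respect a yearly budget of requests.
    recent = [t for t in user.prompt_history if now - t < timedelta(days=365)]
    if len(recent) >= policy.max_prompts_per_year:
        return False
    # Space requests out so the same user is not asked repeatedly.
    if user.prompt_history and now - max(user.prompt_history) < policy.min_gap:
        return False
    return True


now = datetime(2024, 3, 15, 12, 0)
engaged = UserState(levels_completed=5, had_error_this_session=False,
                    prompt_history=[datetime(2024, 1, 10)])
newcomer = UserState(levels_completed=1, had_error_this_session=False)
print(should_request_review(engaged, now), should_request_review(newcomer, now))
```

When this returns `True`, the app would then call the platform's native review request; a `True` result is no guarantee the user actually sees the dialog.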

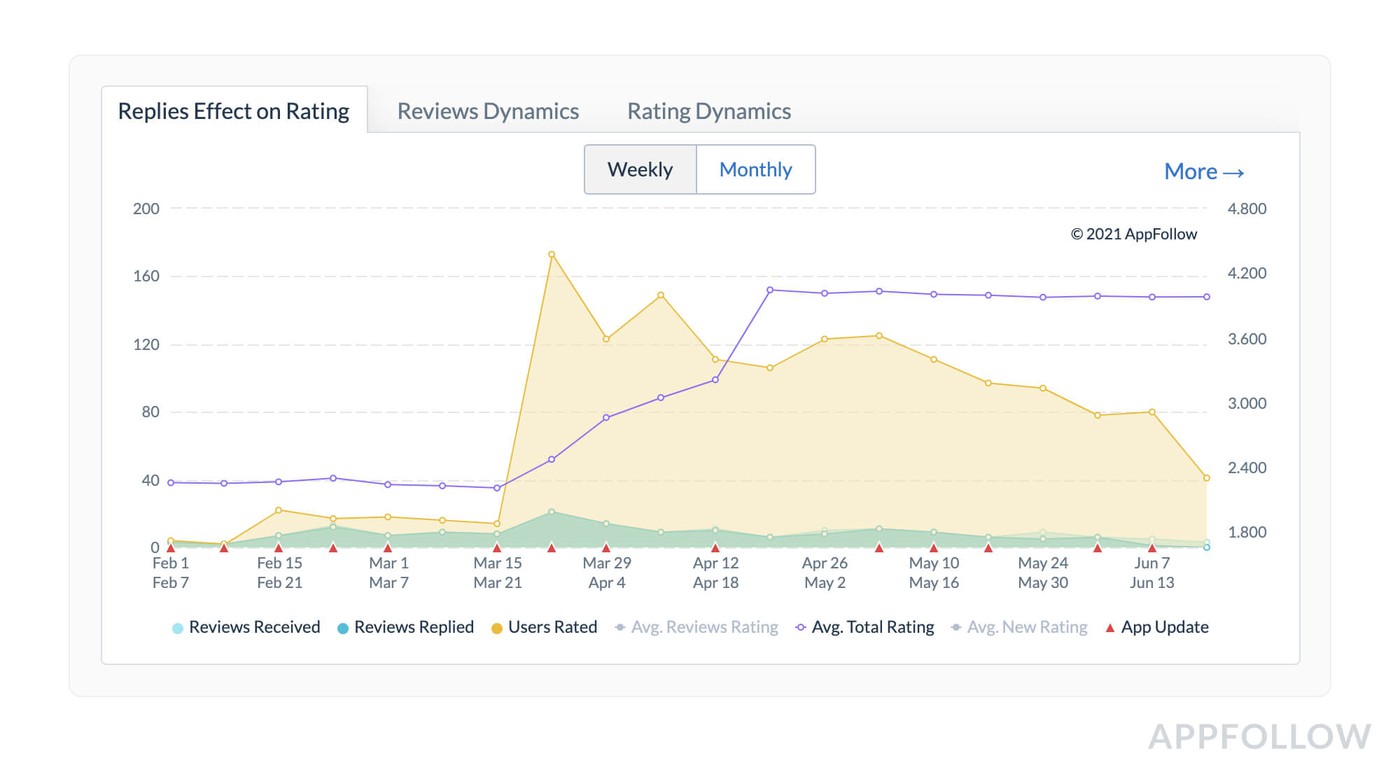

Below, you can see an example of a successful experiment with an in-app rating request. During the update week of 03/15-03/21, the developer implemented the native “Rate us” window, having pre-selected the right cohort of users and the right moment for the prompt to appear. The number of ratings (the users rated metric) increased instantly, and the result is impressive: daily ratings grew roughly eightfold, from an average of 20 ratings/day to a peak of 165 ratings/day (+725%). The developer’s first, quantity-driven goal of getting more ratings was a big success.
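Growth figures like these are worth sanity-checking, because the multiplier and the percentage increase are easy to conflate: an eightfold rise is +700%, not +800%. A short Python check using the example's numbers (20 ratings/day on average before, 165 at peak):

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


baseline = 20  # average ratings/day before the prompt
peak = 165     # peak ratings/day after the prompt
print(f"{percent_change(baseline, peak):.0f}% increase ({peak / baseline:.2f}x)")
# prints "725% increase (8.25x)"
```

Note that this compares a single peak day with an average, so it describes the best day rather than the sustained lift; comparing average ratings/day over equal periods gives a more conservative figure.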

Now, let's look at the dynamics of the average total rating. It grew from 2.4 to 3.9 stars in less than a month, a huge result. The quality-driven target was achieved as well.
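How quickly an average rating can move depends on how many ratings it already contains. Assuming the average is a plain mean over all ratings received so far (store-displayed ratings may weight recent ratings differently), the new value is a weighted mean of the old base and the new ratings. The numbers below are hypothetical and not taken from the case above.

```python
def blended_average(old_avg: float, old_count: int, new_ratings: list[int]) -> float:
    """Average rating after new ratings are added to an existing base."""
    total_stars = old_avg * old_count + sum(new_ratings)
    total_count = old_count + len(new_ratings)
    if total_count == 0:
        raise ValueError("no ratings")
    return total_stars / total_count


# Hypothetical: 100 existing ratings averaging 2.4 stars,
# then 300 new ratings (200 five-star, 100 three-star).
new = [5] * 200 + [3] * 100
print(round(blended_average(2.4, 100, new), 2))

# The same influx barely moves an app that already has 100,000 ratings.
print(round(blended_average(2.4, 100_000, new), 2))
```

This is why a well-targeted prompt can shift the rating of an app with a small rating base within weeks, while an app with a large base needs a sustained change in incoming ratings.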

Not every experiment will succeed on the first try; this example shows how well the developers know their user base. When deciding on the right time and place to show the rating prompt, we highly recommend analyzing your customer journey map and taking into account the specifics of each app store.

These experiments can be driven by different teams: Marketing, Support, or Product. And don’t be discouraged if your first results fall flat; it’s unlikely that you will find the best solution straightaway. You will have to try many variants, so it’s all a matter of testing, trial and error.
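When trying several prompt placements, it helps to score each variant on both axes at once: quantity (ratings per day) and quality (average stars). The Python sketch below does only that; the variant names and ratings are invented for illustration, and a real comparison would also need comparable user cohorts and enough ratings to rule out noise, which this sketch does not test.

```python
import math
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    days: int           # how long the variant ran
    ratings: list[int]  # star values collected while it ran

    @property
    def ratings_per_day(self) -> float:
        """Quantity: how many ratings this prompt placement produced per day."""
        return len(self.ratings) / self.days

    @property
    def average_rating(self) -> float:
        """Quality: mean star value of those ratings (NaN if there are none)."""
        return sum(self.ratings) / len(self.ratings) if self.ratings else math.nan


variants = [
    VariantResult("after level 5", days=7, ratings=[5, 5, 4, 5, 3, 5, 4]),
    VariantResult("after 3 videos", days=7, ratings=[4, 2, 5, 3]),
]
for v in variants:
    print(f"{v.name}: {v.ratings_per_day:.2f} ratings/day, avg {v.average_rating:.2f}")
```

Keeping the two numbers side by side makes trade-offs visible: a placement that produces many ratings but lowers the average may not be the one to keep.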

We trust that you have discovered valuable insights that you can implement in your mobile application. If you're interested in learning more about how AppFollow can enhance your review management process, feel free to contact us for a demonstration here.

Wishing you success in your development,

Your AppFollow Team.